As it stands, I don't think that Effective Altruism (EA) fully describes my ethical values, let alone my other personal values. So, I wanted to write something that does fully describe my values. If you don't know what Effective Altruism is, here is my (relatively) short explanation that I think covers everything you need to know.

I think the explanations on the EA website and the 80,000 Hours website are not that great. I learn best from lots of bullet points that give me condensed information, with nested links that let me gather more information when needed. If this format doesn't work for you, you can always go read the intros on either of those websites!

*UPDATE* 8/31/2023:

My beliefs have changed in a few minor ways. I debated writing a whole new post, and maybe I still will and delete these edits. I also haven’t decided whether I’m gonna delete old information or just write *EDIT* or *UPDATE* after each new belief. I think it’s kinda important to see why some of my beliefs have changed, but I don’t want this to be unnecessarily long and meandering. I’ll decide in a moment. I just decided to go ahead and add the edits below, alongside my previous writing.

*END UPDATE*

Unofficial Official Tenets of Effective Altruism (EA)

*UPDATE* 8/31/2023:

I wanted to include some sort of foreword before going into these issues.

Effective Altruism was created by a group of overwhelmingly white, upper/upper middle class, British men, coming out of primarily the University of Oxford, an extremely prestigious university.

Because of the inherent biases of such a homogeneous group, and despite its members' fierce efforts to fight cognitive and social biases, some of the messaging will sound partial to a white, male, upper-class audience, or will only reflect the realities of white, upper-class men.

The philosophical beliefs they cultivated were largely aimed at people from similarly prestigious universities, or at least people of similar intelligence.

One of the issues with any great undertaking, whether in social impact, business, science, philosophy, etc., is that it can often be bottlenecked by intelligence, or at least appear to be. For example, people often quit trying to become doctors because they don’t feel intelligent enough. While intelligence can be one of these bottlenecks, the real barrier is often a combination of many factors, like gender and race discrimination, poverty, low parental support, place of birth, luck, etc.

Effective Altruism messaging was spread, at least initially, almost exclusively through prestigious universities in the UK and US. As a result, many people in the movement are extremely intelligent, and the average member will assume you graduated from a prestigious university and have some baseline intelligence. It also means members tend to come from quite wealthy backgrounds, possibly wealthier on average than students at my university.

My personal messaging, in great part, is also going to be directed to this audience, as people reading this post will mostly be people from my university, or people in Effective Altruism.

It might feel quite alienating, scary, and bad to read ideas that come off as: “your impact is only worth something if you’re working in this field on this problem” or “this field, biosecurity, requires you to have a PhD in Biology”. To a student at a prestigious university from a wealthy background, these might sound like reasonable things to discuss. To others, it can feel quite bad.

I’m the first person in my family to attend university, and I grew up low income. I would never talk to an audience of people like my parents and go, “well, it’s never too late to think about going to university so you can go change the world!!” It’s incredibly tone-deaf, insensitive, and unlikely to change anyone’s mind. Parents trying to raise children on low incomes have more than enough to worry about without adding their social impact on top of everything else.

However, that is not at all to say that these ideas can’t be useful to you if you don’t have a degree from x school, or don’t have a degree at all. Perhaps you don’t feel very intelligent, or you think you know you’re not very intelligent. There are an incredible number of ways to have social impact, even in some of the areas I talk about, without working in a super intellectually taxing field.

For example, in Animal Welfare, much of the work involves organizing volunteers, facilitating conversations between researchers and policymakers, communicating ideas compassionately, and maintaining donor communication. Some of the most prolific researchers could be twice as impactful or more if they had a trusted personal assistant to write emails, run errands, manage their calendar, keep them on schedule, etc. Some of the greatest interventions happen purely from being in the right place at the right time, like a bartender who has the ear of a congressperson.

But you also shouldn’t count yourself out! If you’re 40 years old and thinking about attending university for the first time with a Biology PhD in sight, it might be worth seriously considering whether that’s the right path for you.

If you’re interested in positive social impact, there are many ways to make incredible differences in the world, no matter what your background is. By thinking about it in the first place, you’re nearly guaranteed to increase your impact.

*END UPDATE*

Impartial concern for welfare

People matter regardless of their race, ethnicity, gender, nationality, sexual orientation, ability, socioeconomic status, age, interests, language, religion, profession, lifestyle, culture, experiences, etc.

This is all normal stuff. Ideally, any "good person" already agrees with this.

People ALSO matter regardless of where they live.

Someone suffering further away from you is just as morally relevant as someone suffering in front of you. This is not immediately obvious to most people.

Here is the classic Drowning Child Argument by Peter Singer, with accompanying images by Lena Ashooh.

The lives of animals matter, because we care about beings based on their capacity to suffer, not their intelligence or general ability. To quote Jeremy Bentham:

The question is not, Can they reason? nor, Can they talk? but, Can they suffer? Why should the law refuse its protection to any sensitive being?

Prioritization

If we want to do good, we have to prioritize problems that are more pressing than others. Ex. climate change might deserve more attention than mandating personal finance courses in high school. Personal finance courses are still important, and they might still deserve money or resources, but because we live in a world of scarcity, we have to allocate our resources (money, people, time) as effectively as we can.

Within climate change, we could also say that climate policy might deserve more attention than carbon recapture. Again, this doesn't make something like carbon recapture any less important; it just means climate policy is better at effecting change. (To be clear, I have no opinion on which intervention is better.)

EAs argue that you can actually use scientific inquiry to work out which problems are more pressing. This is the main idea behind Global Priorities Research (GPR). Most of the time when we try to do good, we prioritize based entirely on our priors and opinions, instead of on evidence-backed approaches.

If you don't prioritize intentionally, you still end up prioritizing unintentionally. That's how most of our world is run today. But in a world where $5,000 can save a child's life, we can't afford to be inefficient.

This pretty much looks like utilitarianism with some caveats.

Utilitarianism is a normative ethical theory, and a form of consequentialism, which says an action is right if it leads to the most happiness (utility) for the greatest number of people. tldr; do the thing that leads to the best outcome.

Most EA intro material doesn't explain utilitarianism or normative ethics at all, even though they're among the primary things EAs talk about. If you don't understand them, you'll be quite out of the loop.

To cut through the jargon, it's easiest to first explain the three primary classes of normative ethics, then consequentialism, and then utilitarianism.

*UPDATE* 8/31/23:

I don’t think all of this information is necessarily that relevant for understanding EA. I think you just need to understand prioritization. Some people have told me the utilitarianism explanations are slightly confusing. It’s kind of confusing stuff. Maybe I’ll go back and try to make it less confusing at some point, but for now, I honestly think it’s less confusing than most (all?) of the information on the internet. If you have questions, you can always reach out to me and I’ll do my best to give a better explanation. I *do* think this section is somewhat important for understanding my personal beliefs, though.

*END UPDATE*

The main classes of normative ethics are:

Deontological Ethics aka Deontology: Rights and wrongs are determined by a set of rules or principles. Ex. do not lie, do not murder, obey your parents.

Act only in accordance with that maxim through which you can at the same time will that it become a universal law. (Kant's categorical imperative, formula of universal law)

So act that you use humanity, whether in your own person or in the person of any other, always at the same time as an end, never merely as a means. (Kant's categorical imperative, formula of humanity)

Virtue Ethics: Rights and wrongs are determined by a set of virtues. Ex. be a good person.

Faith, hope, charity, prudence, fortitude, temperance, justice (Catholic virtues)

Courage, temperance, liberality, magnificence, magnanimity, proper ambition, patience, truthfulness, wittiness, friendliness, modesty, righteous indignation (Aristotle's virtues)

Temperance, silence, order, resolution, frugality, industry, sincerity, justice, moderation, cleanliness, tranquility, chastity, humility (Ben Franklin's virtues)

Trustworthy, loyal, helpful, friendly, courteous, kind, obedient, cheerful, thrifty, brave, clean, reverent (Boy Scout virtues)

Consequentialism: The consequences of someone's actions form the ultimate basis of the rightness or wrongness of that action.

I decided to give Utilitarianism its own section, because it was going to get really ugly, really fast, in nested bullets.

Utilitarianism

Do the thing that brings about the most utility (aka wellbeing aka pleasure aka happiness). Ex. in the trolley problem, switch the tracks so the train kills 1 person instead of 5.

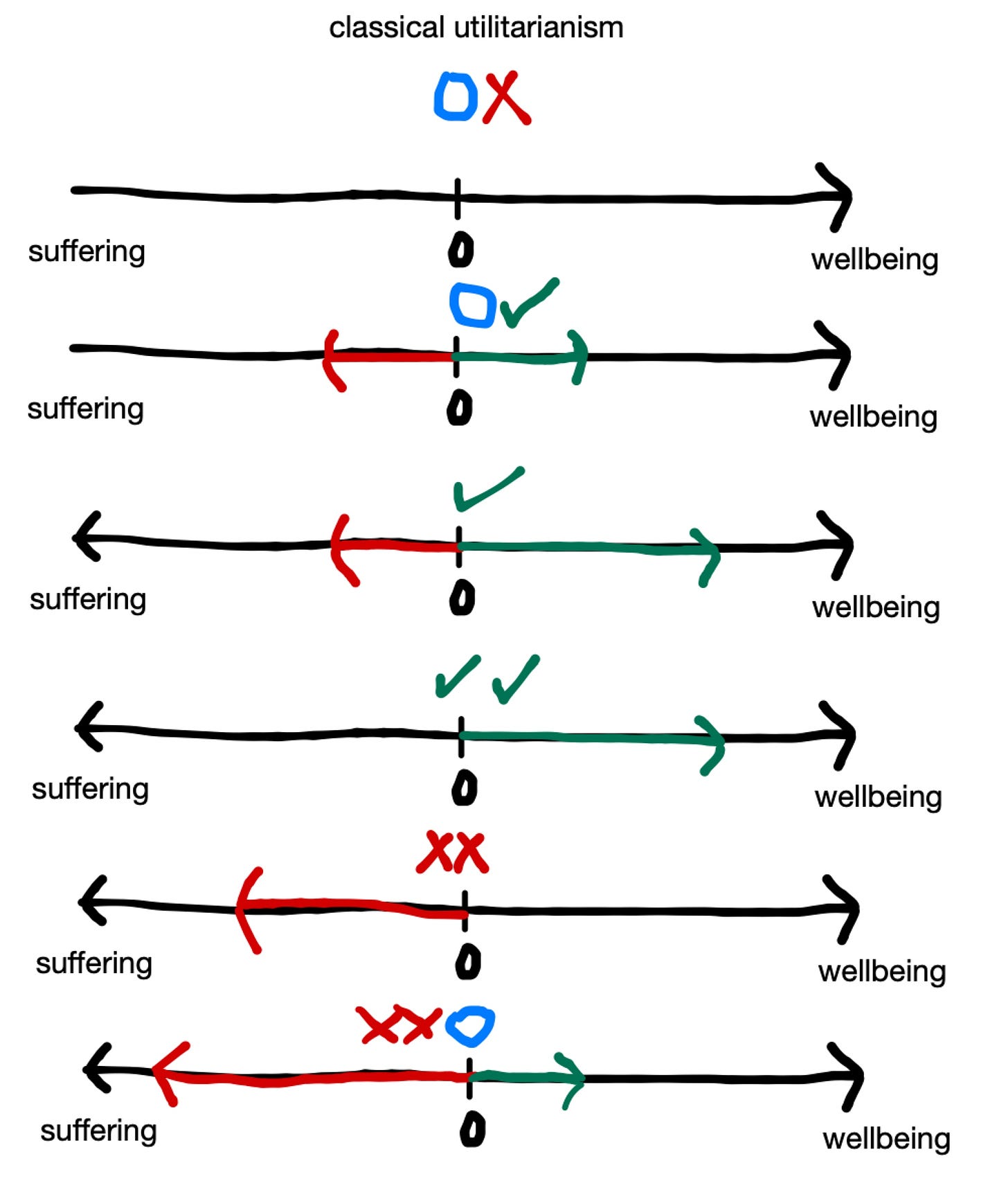

To be clear, blue ◯ on these diagrams means neutral, red X means bad, and green checkmark ✓ means good.

Classical "Hedonic" Utilitarianism (the one described above)

A universe with no beings at all, even though it is conceivably neutral, is considered bad by most utilitarians. A universe where beings exist, but in a neutral state, is much closer to neutral.

A universe with equal amounts of suffering and wellbeing is considered neutral or neutral-good by most utilitarians, because they think this state is better than nonexistence.

More wellbeing than suffering means good. The more wellbeing and the less suffering you get, that's even better.

If every being in the universe were suffering, with no hope for change, I think utilitarians would universally agree that this is really bad. But if there were more suffering than wellbeing, with at least some wellbeing in the mix, I think most utilitarians would consider this neutral-bad. I imagine they'd think similarly about a world where there is only suffering but a significant probability that things get better.

Consider the utility of our world today: it is likely net negative if you give equal moral weight to every being with the capacity to suffer, yet most utilitarians I know still consider this world much better than nonexistence.

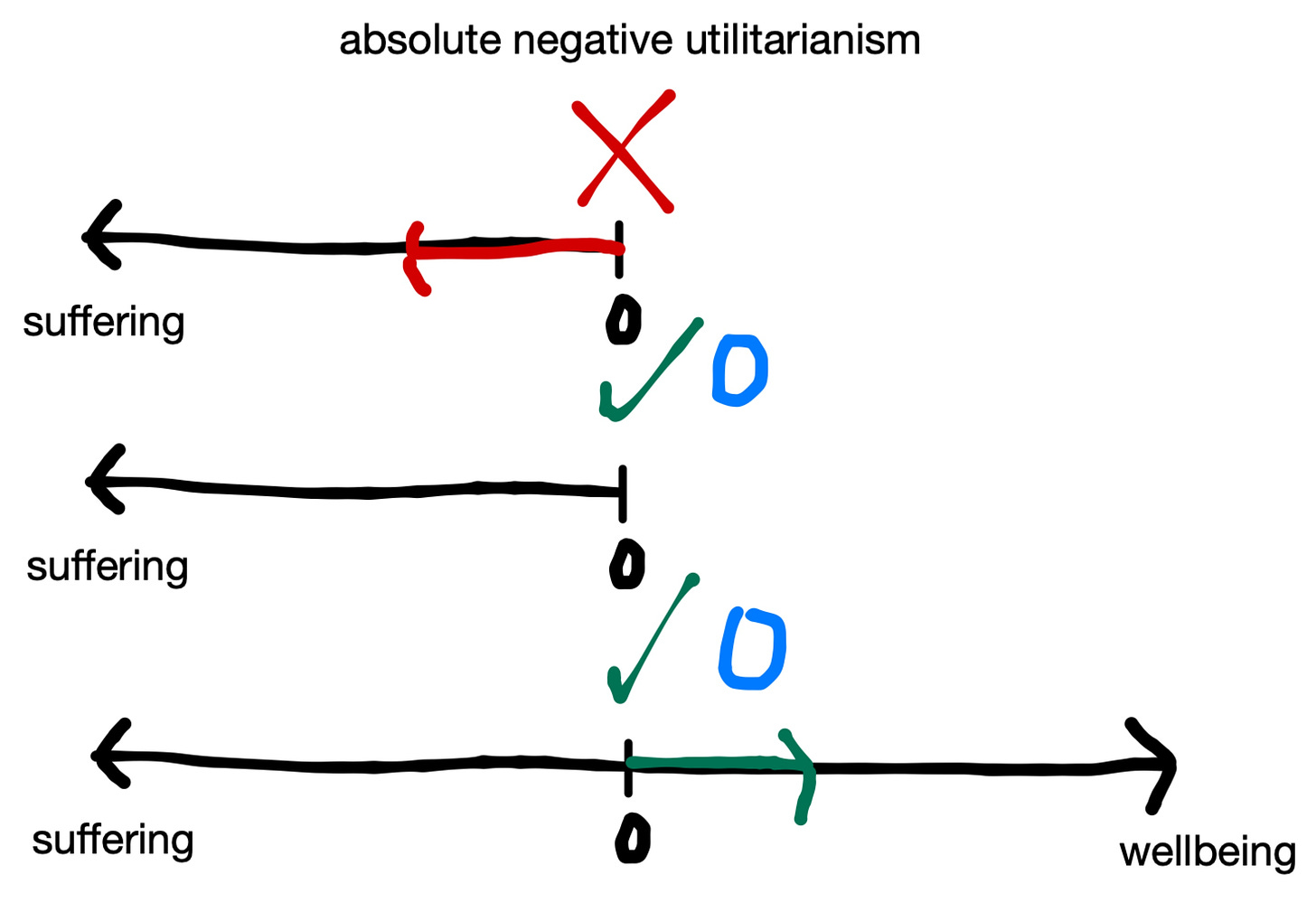

Absolute Negative Utilitarianism: do the thing that causes the most reduction of total negative utility (aka suffering). Generating wellbeing is neutral. Absolute NUs (Negative Utilitarians) are completely indifferent to happiness.

For a longer explanation of how that's even possible, see here by David Pearce.

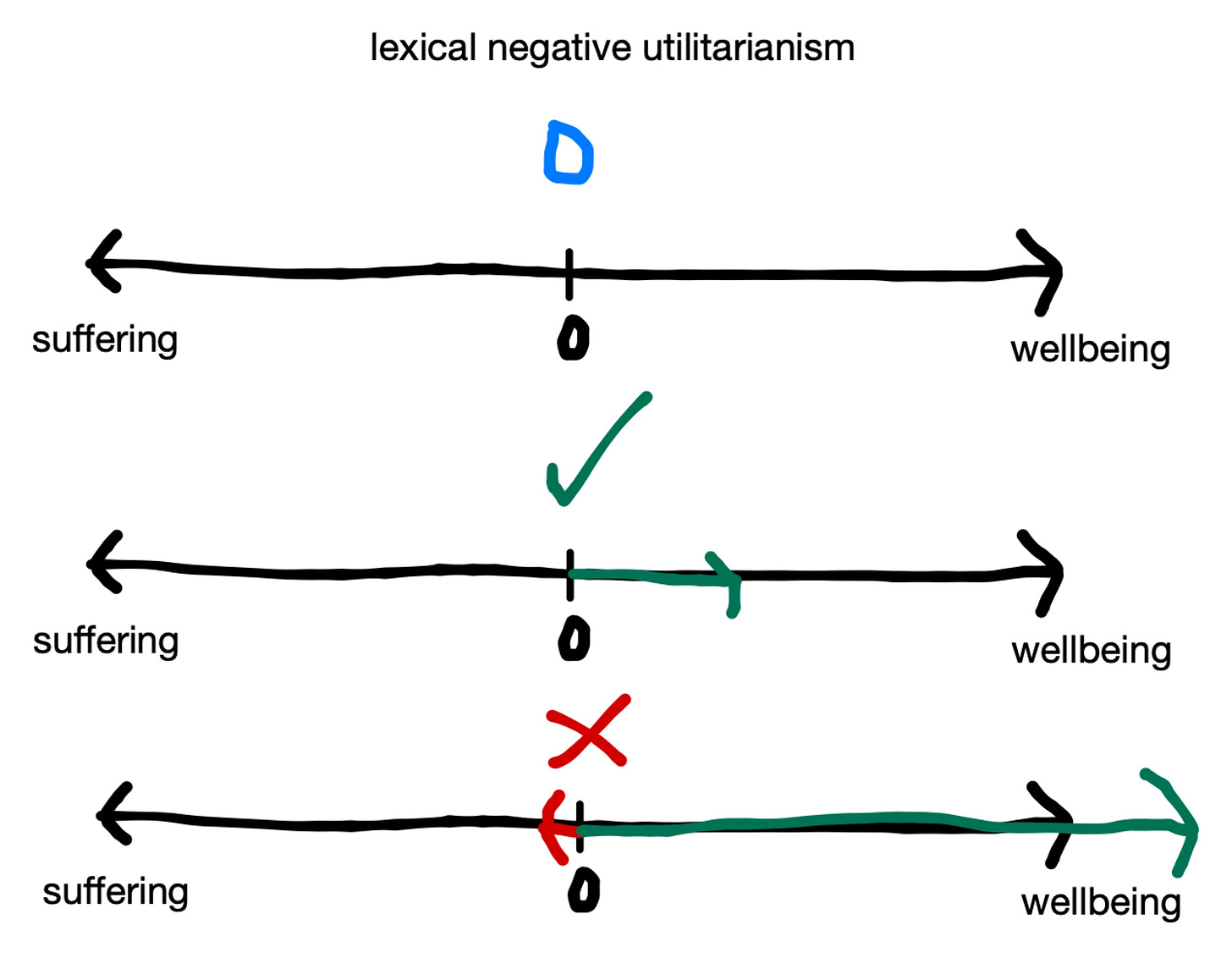

Lexical Negative Utilitarianism: Suffering and happiness both count, but no level of happiness can compensate for any level of suffering (no matter how small).

Lexical-threshold Negative Utilitarianism: Suffering and happiness both count, and some suffering can be traded with (probably much more) happiness, but there is some amount of suffering that no happiness can outweigh.

If you're a regular utilitarian (classical or negative), there is some number of people stubbing their toe (10^googol, or whatever the number is) such that preventing all of those toe stubs is as valuable as preventing one minute in a brazen bull. If there's a lexical threshold, then stopping the suffering of the brazen bull is infinitely more important.

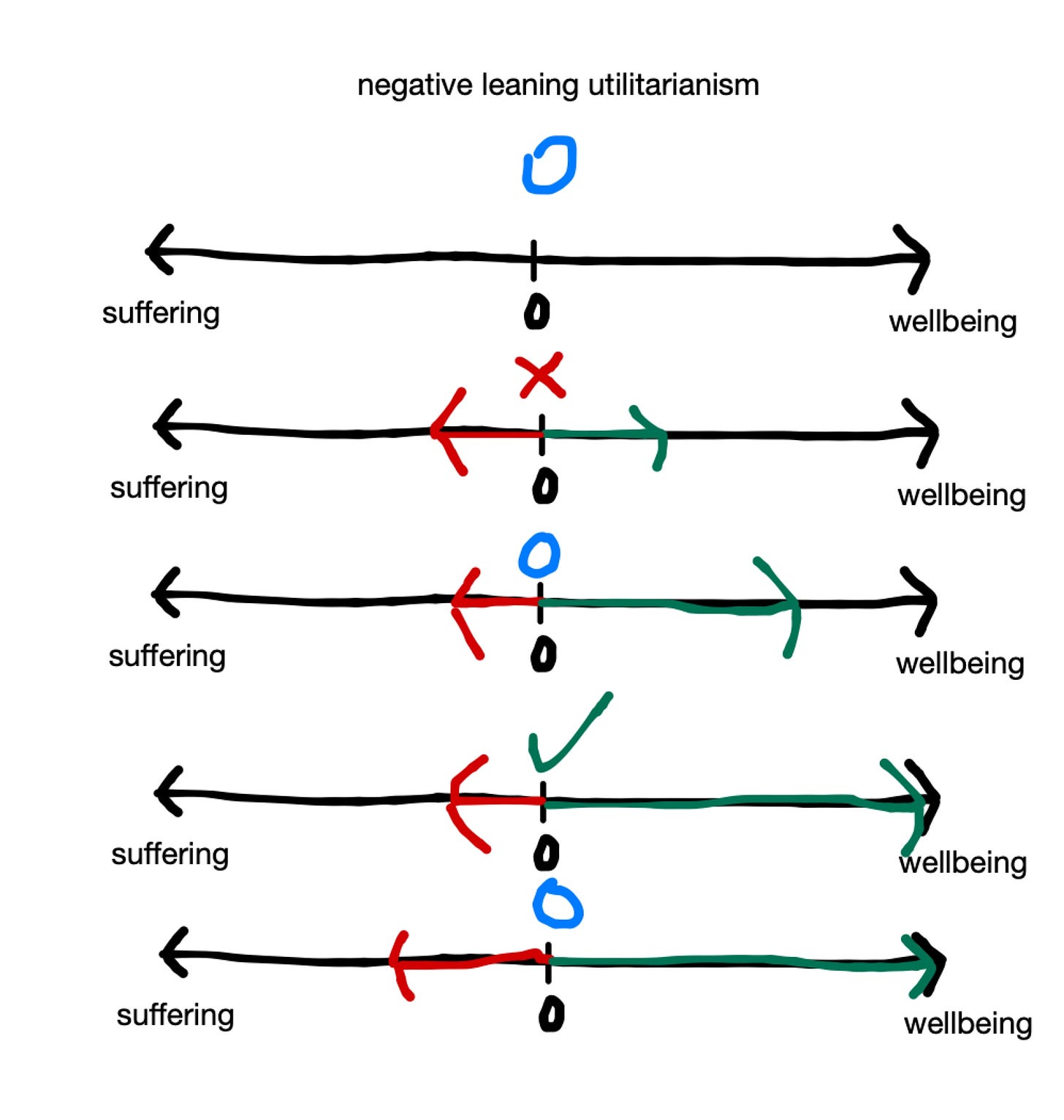
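To make the difference concrete, here is a minimal Python sketch of how a plain sum and a lexical-threshold view rank the two options above. It assumes experiences can be scored on one numeric intensity scale; the intensities (-1 for a stubbed toe, -1,000 for a minute in the brazen bull), the threshold of 100, and the function names are all hypothetical illustrations, not standard values or definitions.

```python
# Hypothetical sketch: an outcome is a list of (intensity, count) pairs.
# Negative intensity = suffering, positive = wellbeing. The scale is made up.
Outcome = list[tuple[int, int]]


def classical_score(outcome: Outcome) -> int:
    """Regular (classical or negative) utilitarianism: add everything up."""
    return sum(intensity * count for intensity, count in outcome)


def lexical_threshold_key(outcome: Outcome, threshold: int) -> tuple[int, int]:
    """Lexical-threshold NU: severe suffering is compared before anything else.

    Python compares tuples element by element, so no amount of ordinary
    utility in the second slot can make up for extra severe suffering
    (anything worse than -threshold) in the first slot. Higher is better.
    """
    severe = sum(i * n for i, n in outcome if i < -threshold)
    ordinary = sum(i * n for i, n in outcome if i >= -threshold)
    return (severe, ordinary)


TOE_STUB = -1        # hypothetical intensity of one stubbed toe
BRAZEN_BULL = -1000  # hypothetical intensity of one minute in a brazen bull
GOOGOL = 10**100     # Python integers don't overflow, so this stays exact

# Option A: stop the brazen bull, so the toe stubs still happen.
stop_the_bull: Outcome = [(TOE_STUB, GOOGOL)]
# Option B: stop the toe stubs, so the brazen bull still happens.
stop_the_toes: Outcome = [(BRAZEN_BULL, 1)]

# The plain sum prefers stopping the toe stubs (prints False)...
print(classical_score(stop_the_bull) > classical_score(stop_the_toes))
# ...while the lexical threshold prefers stopping the bull (prints True).
print(lexical_threshold_key(stop_the_bull, threshold=100)
      > lexical_threshold_key(stop_the_toes, threshold=100))
```

If you raised the hypothetical threshold to 1,000 or more, the bull-minute would count as "ordinary" suffering and the lexical view would agree with the plain sum again; the whole disagreement lives in where that threshold sits.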

Negative-leaning Utilitarianism: suffering deserves vastly greater weight than happiness. For instance, one minute in a brazen bull might require millions of years of happy life to outweigh morally. So generating wellbeing has SOME value greater than zero.

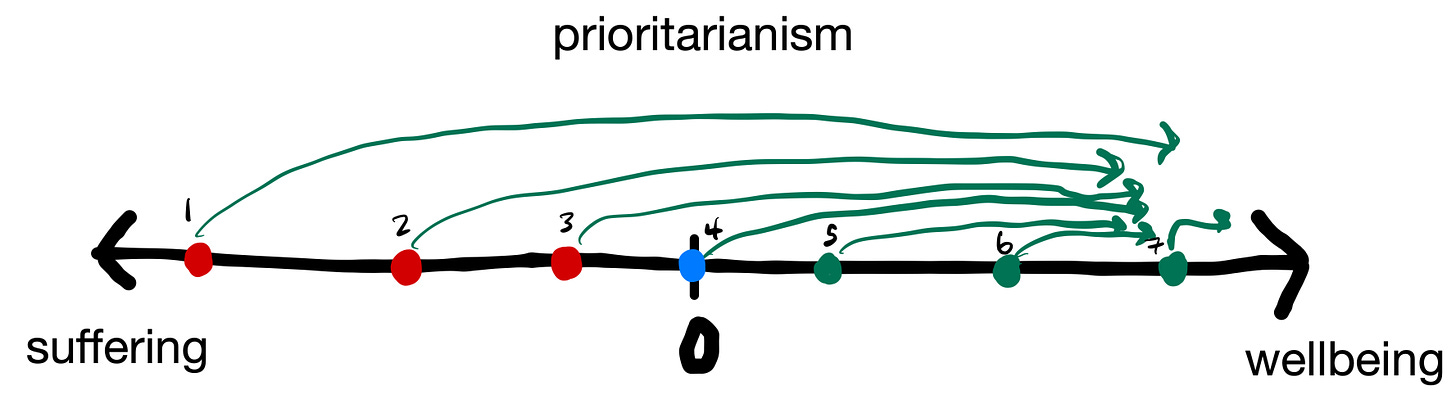
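Classical, negative-leaning, and absolute negative utilitarianism can all be sketched as one weighted sum that differs only in how much happiness and suffering count. The Python below is a minimal illustration under made-up assumptions: one minute in a brazen bull is scored as 1,000 units of suffering and one happy year as 1 unit of happiness; the weights and function names are hypothetical.

```python
import math

BULL_MINUTE = 1000  # hypothetical suffering units in one minute in a brazen bull
HAPPY_YEAR = 1      # hypothetical happiness units in one happy year


def weighted_utility(happiness: float, suffering: float,
                     suffering_weight: float, happiness_weight: float = 1.0) -> float:
    """Net utility of a world; both totals are non-negative amounts."""
    return happiness_weight * happiness - suffering_weight * suffering


def happy_years_to_outweigh(suffering_weight: float,
                            happiness_weight: float = 1.0) -> float:
    """Happy years needed to bring a world with one bull-minute back to zero.

    Solves weighted_utility(years * HAPPY_YEAR, BULL_MINUTE, ...) == 0 for years.
    """
    if happiness_weight == 0:
        return math.inf  # absolute NU: no amount of happiness ever helps
    return (suffering_weight * BULL_MINUTE) / (happiness_weight * HAPPY_YEAR)


print(happy_years_to_outweigh(suffering_weight=1))     # classical
print(happy_years_to_outweigh(suffering_weight=2000))  # negative-leaning
print(happy_years_to_outweigh(1, happiness_weight=0))  # absolute NU
```

This prints 1000.0, 2000000.0 and inf. With the hypothetical suffering weight of 2,000, one bull-minute takes two million happy years to offset, which is the "millions of years" intuition. A lexical-threshold view can't be written as one finite weight like this, because it treats only *some* suffering as impossible to outweigh.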

Prioritarianism: prioritize the reduction of suffering for the worst off first.

Prioritarians differ on how much this ordering matters. They might give only some extra weight, instead of all moral weight, to those who are worse off. Negative Prioritarians care little or not at all about giving more wellbeing to those who already have a small amount of it, until those who are suffering have overtaken them (think of it as utility leapfrog).

See here by Animal Ethics for a more thorough investigation.

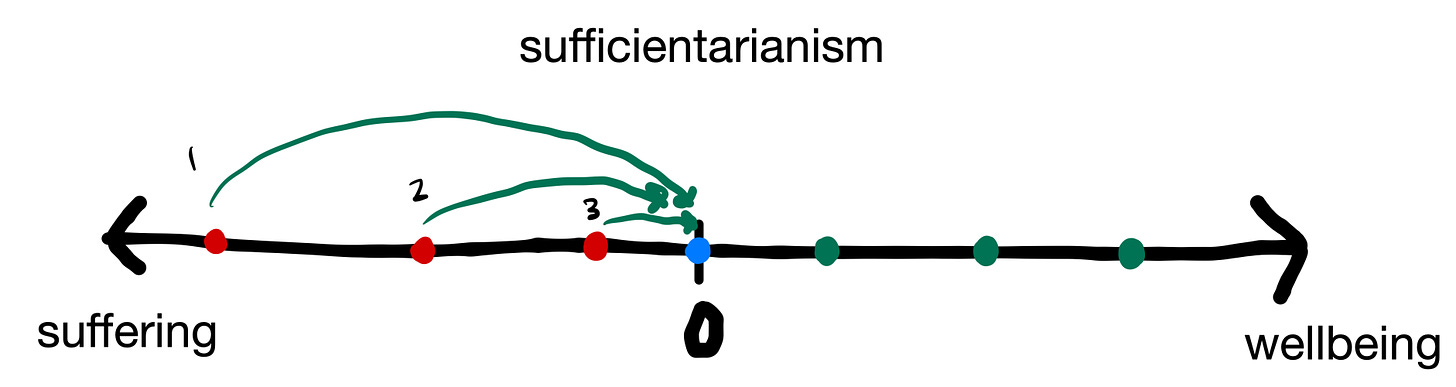
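One simple way to model "extra weight for the worse off" is to count every unit of wellbeing more heavily when it goes to someone below zero (i.e. someone suffering). The Python below is a minimal sketch of that idea only; the piecewise weighting, the `priority` parameter, and the example numbers are hypothetical, and real prioritarian views usually use a smooth concave function instead.

```python
def prioritarian_value(wellbeing: list[float], priority: float = 2.0) -> float:
    """Sum wellbeing, counting each unit below zero `priority` times as much.

    priority = 1.0 is plain classical utilitarianism; larger values weight
    reducing suffering more heavily than adding wellbeing for the better off.
    """
    return sum(w if w >= 0 else priority * w for w in wellbeing)


def best_person_to_help(wellbeing: list[float], amount: float = 1.0,
                        priority: float = 2.0) -> int:
    """Index of the person whose gain of `amount` raises total value the most."""
    def value_if_helped(i: int) -> float:
        improved = list(wellbeing)
        improved[i] += amount
        return prioritarian_value(improved, priority)

    # max() returns the first index among ties.
    return max(range(len(wellbeing)), key=value_if_helped)


people = [3.0, -5.0, 1.0]  # hypothetical wellbeing levels; person 1 is suffering
print(best_person_to_help(people, priority=2.0))  # 1: help the suffering person first
print(best_person_to_help(people, priority=1.0))  # 0: every choice ties, so max() returns the first index
```

Cranking `priority` very high approximates the negative prioritarian "leapfrog" picture: almost nothing matters except lifting whoever is below zero.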

Sufficientarianism: prioritize the reduction of suffering for the worst off, and be agnostic about how wellbeing is distributed.

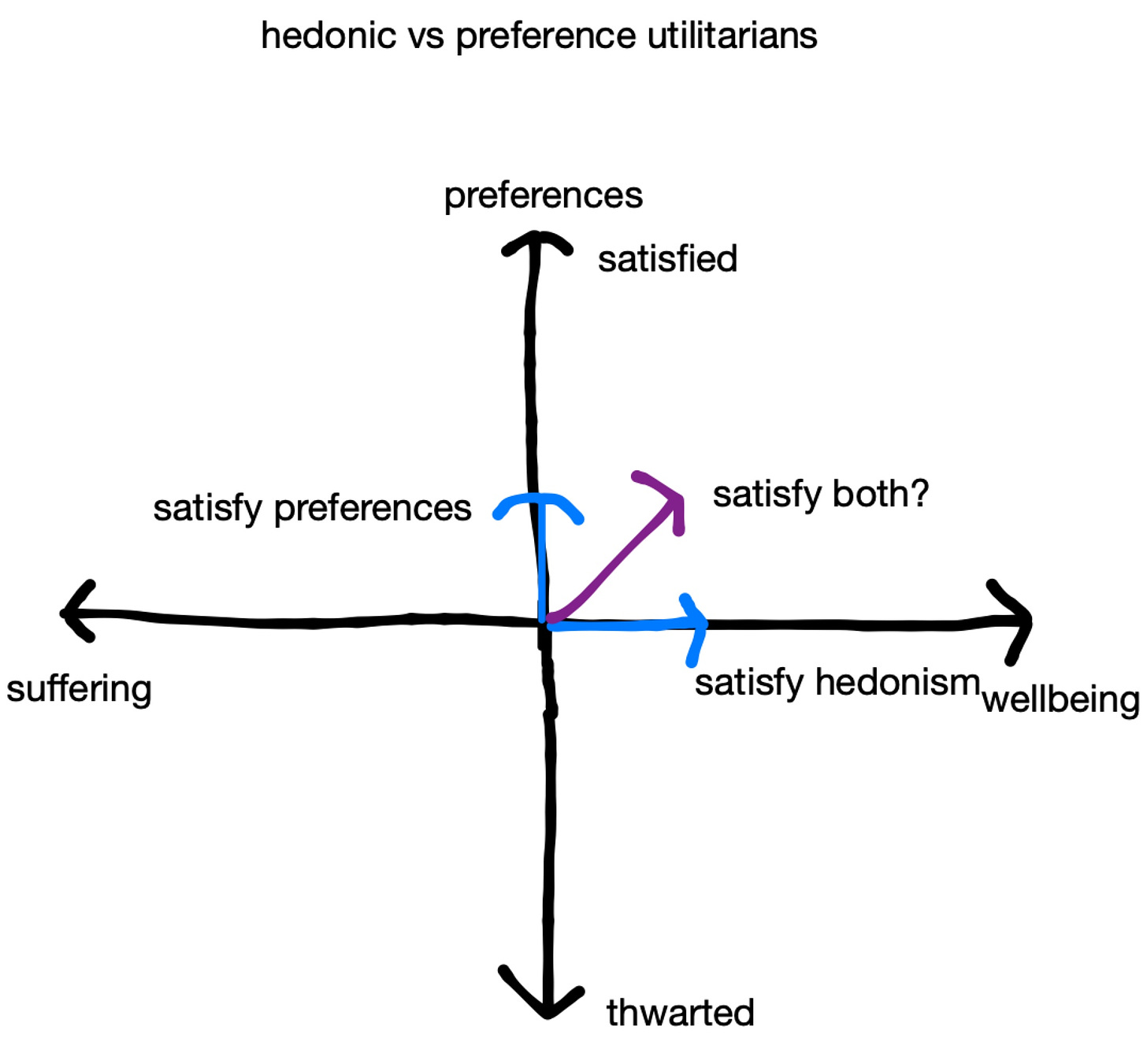

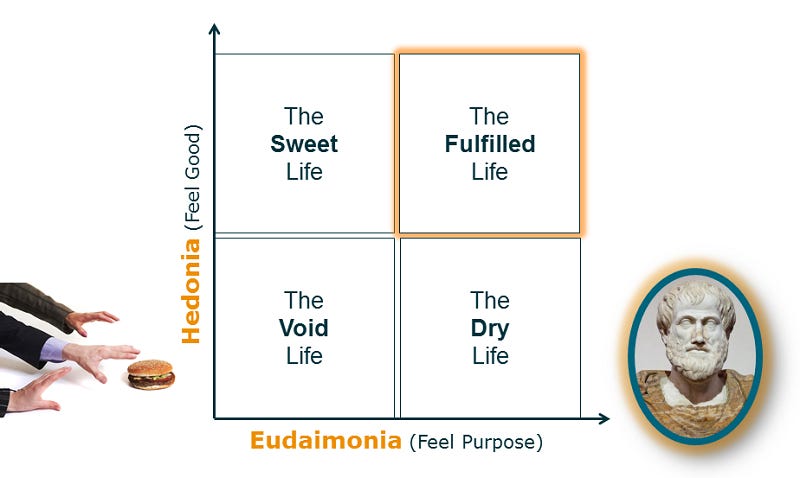

Preference Utilitarianism: do the thing that best fulfills individuals' own preferences, which may or may not be hedonistic. See eudaimonia.

For example, instead of having everyone wirehead (see The Hedonistic Imperative by David Pearce) in the far future, which might hypothetically be the "best" option, people should get to choose how they'd like to exist, such as pursuing intellectual or artistic goals, being with their families, or finding purpose. And not only should people have that choice, we should focus our efforts on helping them satisfy their preferences, instead of helping them find pleasure.

If wireheading is unfamiliar, you can think of it as really good drugs with no negative side effects.

A less "alien" example is that preference utilitarians are interested in helping people find purpose, instead of helping them find happiness.

Average vs Total Utilitarianism: should you increase the average amount of happiness beings are experiencing, or the total amount of happiness among all beings?

See the repugnant conclusion, population ethics, and utility monsters.

For further reading on population ethics, see Population Axiology by Hilary Greaves.
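A minimal Python sketch of how the two views can disagree, using made-up wellbeing numbers: a small, very happy world versus a huge world of lives barely worth living, which is the shape of the repugnant conclusion. The world sizes and wellbeing levels are hypothetical.

```python
def total_utility(population: list[float]) -> float:
    """Total utilitarianism: add up everyone's wellbeing."""
    return sum(population)


def average_utility(population: list[float]) -> float:
    """Average utilitarianism: mean wellbeing per being."""
    if not population:
        raise ValueError("average utility is undefined for an empty population")
    return sum(population) / len(population)


small_happy_world = [10.0] * 10  # hypothetical: 10 beings at wellbeing 10
huge_meh_world = [1.0] * 1000    # hypothetical: 1,000 beings at wellbeing 1

# Total view prefers the huge world (1000.0 vs 100.0): prints True.
print(total_utility(huge_meh_world) > total_utility(small_happy_world))
# Average view prefers the small world (10.0 vs 1.0): prints True.
print(average_utility(small_happy_world) > average_utility(huge_meh_world))
```

Raising an error for an empty population is a deliberate choice in this sketch: an average over zero beings is undefined, so the average view says nothing about whether an empty universe is better or worse than ours.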

Rule vs Act Utilitarianism: should you create a set of rules that, when followed, leads to the greatest amount of utility, or evaluate each situation (or “act”) on a case-by-case basis? Classical Utilitarians are Act Utilitarians by default.

You can mix and match: Negative Preference Utilitarianism, Total Hedonic Utilitarianism, etc.

ChatGPT song

(It was very hard to get it to rhyme something with “ethics”. Sorry in advance if you cringe, but I think this is a pretty easy overview of how to remember things!)

Verse 1:

Deontology's all about rules we must obey

Consequentialism looks at the end, what we weigh

Virtue ethics is about being a good soul

All three perspectives, make us whole.

Chorus:

Ethics in our lives, a crucial role play

Helping us navigate what's right and what's astray

Deontology, consequentialism, virtue ethics

All unique, all distinct in their aesthetics.

Verse 2:

Deontology's all about duty and rules

Kant's categorical imperative, it's no fools

Don't lie, don't steal, always tell the truth

Following these rules is the noble proof.

Chorus:

Ethics in our lives, a crucial role play

Helping us navigate what's right and what's astray

Deontology, consequentialism, virtue ethics

All unique, all distinct in their aesthetics.

Verse 3:

Consequentialism's about the end in mind

Maximizing good and leaving bad behind

The principle of utility's what we follow

To ensure the best outcome for all to swallow.

Chorus:

Ethics in our lives, a crucial role play

Helping us navigate what's right and what's astray

Deontology, consequentialism, virtue ethics

All unique, all distinct in their aesthetics.

Verse 4:

Virtue ethics is about being a good person

It's not just rules, it's about the inner vision

Being brave, just, and temperate

Is what it takes to be virtuous, it's great.

Chorus:

Ethics in our lives, a crucial role play

Helping us navigate what's right and what's astray

Deontology, consequentialism, virtue ethics

All unique, all distinct in their aesthetics.

Outro:

Deontology, consequentialism, virtue's way

Three perspectives to guide us through each day

Each with its own unique point of view

Choose the one that resonates with you.

Caveats

The caveats are listed here and here.

tldr; it's almost never OK to do harm in order to do good because:

It might violate rights

It's probably not the best way to maximize utility

Moral uncertainty

There are better, less risky alternatives

tldr; sometimes when trying to do good, you end up doing bad. Don't do that!

How prioritization is applied in EA

The ITN framework

Importance or Scale: How important/pressing is the problem? How many people/beings does it affect? How much suffering is caused?

Tractability or Solvability: Is the problem solvable, or can we at least gain traction on it? Can we solve the problem with money/resources or does it require human capital? If we throw money or people at the problem, does the problem ease up linearly? Does it ease up exponentially? Does it ease up logarithmically? Does it not ease up at all until one day the problem is solved ("hockey stick")?

Neglectedness or Uncrowdedness: How many people are already working on the problem? Does adding more people or resources help the problem get solved faster? Would fewer people working on the problem actually make the work more efficient? Could we spend less money more efficiently to better solve the problem? Is there lots of competition in the field?
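80,000 Hours combines these three factors by multiplying them, with each factor defined so the units cancel into "good done per extra unit of resources". The Python below is a minimal sketch assuming that multiplicative form; the problem names and every number are hypothetical placeholders, not real estimates for any cause.

```python
from dataclasses import dataclass


@dataclass
class Problem:
    """Hypothetical ITN scores for one problem."""
    name: str
    importance: float     # good done per % of the problem solved
    tractability: float   # % of the problem solved per % increase in resources
    neglectedness: float  # % increase in resources per extra person or dollar

    def marginal_value(self) -> float:
        # The units chain: (good / % solved) * (% solved / % more resources)
        # * (% more resources / extra unit) = good done per extra unit.
        return self.importance * self.tractability * self.neglectedness


problems = [
    # Huge in scale but already crowded, so an extra unit barely moves resources.
    Problem("Hypothetical cause A", importance=100.0, tractability=0.5, neglectedness=0.01),
    # Smaller in scale, but tractable and neglected.
    Problem("Hypothetical cause B", importance=10.0, tractability=1.0, neglectedness=1.0),
]

for problem in sorted(problems, key=Problem.marginal_value, reverse=True):
    print(f"{problem.name}: {problem.marginal_value():.2f}")
```

Here cause B (10.00) ranks above cause A (0.50). Because the factors multiply, a near-zero score on any one of them sinks the total, which is why a problem can be enormous in scale and still rank low if it is crowded or intractable.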

Cause prioritization. The primary causes prioritized are here, namely (in alphabetical order):

*UPDATE* 8/31/23:

I think I should include some caveats to this list. For one, the list on the 80,000 Hours website is in a rough order of priority, but the issue is much more complicated than that.

It’s pretty difficult to compare cause areas one-to-one. That’s why we use general metrics of utility and try to put together some rough estimates. It also means this prioritization list depends on someone’s final judgment at 80,000 Hours. Ex. there are many EAs who care about AI Alignment but think the chance of a Biosecurity catastrophe is negligible. There are fields like Wild Animal Welfare, which are definitely great in scale and highly neglected, but questionably tractable. Some people have different opinions on how tractable the issue is; others take issue with how much the welfare of some wild animals, like insects (or even facial mites), actually matters.

Also, how impactful you can be in these areas varies a great deal depending on your fit and expertise, and on how much of an area’s ITN potential your particular work actually captures, just like I discussed earlier with Climate Policy vs Carbon Recapture. Even if you pick a cause area that gets good ITN numbers, you might apply your effort to something that doesn’t bring about much change.

*END UPDATE*

note: moving on from prioritization to the next section of EA

Expected value and counterfactuals

I went into counterfactualism in depth in my last blog post. tldr; your impact isn't what you actually do in the world, but how the world looks different because you exist. If you didn't exist, the impactful thing you did might've been done by someone else.

This also applies in the negative case, i.e., your negative impact is only as bad as the difference between what you did and what would have happened anyway if you didn't exist.
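The counterfactual idea boils down to "what happened with you" minus "what would have happened without you". The Python sketch below assumes a deliberately simple, hypothetical model: without you, someone else would have done the same thing with some probability, and otherwise nothing would have happened (value 0). The function name and numbers are illustrative only.

```python
def counterfactual_impact(your_outcome: float, p_replaced: float,
                          replacement_outcome: float) -> float:
    """Value of the world with you minus the expected value of the world without you.

    your_outcome: value of what you actually did (negative means harm).
    p_replaced: chance that, without you, someone else would have done it.
    replacement_outcome: value if that someone else had done it instead.
    Assumes that if nobody replaces you, the outcome would have been 0.
    """
    without_you = p_replaced * replacement_outcome + (1 - p_replaced) * 0.0
    return your_outcome - without_you


# Good work worth 100 units that someone else would have done 60% of the time.
print(counterfactual_impact(100.0, p_replaced=0.6, replacement_outcome=100.0))
# A harmful job worth -50 units that someone else would have done 90% of the time.
print(counterfactual_impact(-50.0, p_replaced=0.9, replacement_outcome=-50.0))
```

These come out to roughly 40 and -5: your good work only counts for the part that wouldn't have happened anyway, and the same discount applies to harm.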

Expected value: if we think something is 10% likely to occur, and it would kill 10 million people if it occurred, then it costs 10% × 10 million = 1 million lives in expectation, so fully solving the problem would save 1 million lives in expectation. Your own share of that impact shrinks the more people are working on the issue. We can use expected value calculations in our prioritization structure.

Obviously, this is heavily dependent on how accurate your expected values are! Hence a heavy focus on forecasting in the community.
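The arithmetic in the expected value example fits in a few lines of Python. Splitting credit evenly among everyone working on the problem is a hypothetical simplification for illustration; real returns to extra people are rarely that clean (see the tractability questions in the ITN framework).

```python
def expected_deaths(probability: float, deaths_if_it_happens: float) -> float:
    """Expected deaths from a risk: probability times severity."""
    return probability * deaths_if_it_happens


def expected_lives_saved(probability: float, deaths_if_it_happens: float,
                         fraction_of_risk_removed: float) -> float:
    """Lives saved in expectation if some work removes this fraction of the risk."""
    return fraction_of_risk_removed * expected_deaths(probability, deaths_if_it_happens)


# The example from the text: a 10% chance of 10 million deaths.
print(expected_deaths(0.10, 10_000_000))

# Hypothetical: 1,000 equally effective people remove the whole risk together,
# so each person's share is 1/1,000 of the risk.
print(expected_lives_saved(0.10, 10_000_000, fraction_of_risk_removed=1 / 1000))
```

This gives about 1 million expected deaths, and about 1,000 lives saved in expectation per person under the even-split assumption. Any error in the 10% estimate flows straight through both numbers, which is why forecasting matters so much here.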

Longtermism

An extension of "Impartial concern for welfare": people who will exist in the future, and whom we can reasonably influence, are morally relevant.

The classic example is that old people should care about preventing climate change, even though they're likely to die before its most adverse effects hit, because they should care about the future of society, and they can do something to reasonably affect it.

Longtermists take this a step further, though, and think it's likely that we can affect, in the present, the lives of beings living thousands of years from now, mainly by working to reduce existential risk (x-risk).

I'll let 80,000 Hours take over the rest (I just copied and pasted from their website). note: this is a very classical utilitarian approach. There are many in EA who also value affecting the long-term future, but care about doing so through a suffering-focused lens.

*UPDATE* 8/31/23:

I actually think most of the list below is strange and even dystopian, even for someone who has been thinking about these things for a while. I also think the arguments at the link above are not very good.

I should have written my own Longtermism list, but I wanted to honestly show what many EAs believe.

My own reason for Longtermism is something like this: the amount of suffering, in expectation, that will exist in the future (probably the long-term future) is far, far, far, far greater than the amount that exists today. See s-risks. This means that, in expectation, the best thing we can do to reduce suffering is to affect the long-term future today. This probably looks like making sure AI Alignment goes well.

*END UPDATE*

Copy and pasted

Why Longtermism

The Earth could remain habitable for 600-800 million years, so there could be about 21 million future generations, and they could lead great lives, whatever you think “great” consists of. Even if you don’t think future generations matter as much as the present generation, since there could be so many of them, they could still be our key concern.

Civilization could also eventually reach other planets — there are 100 billion planets in the Milky Way alone. So, even if there’s only a small chance of this happening, there could also be dramatically more people per generation than there are today. By reaching other planets, civilization could also last even longer than if we stay on Earth.

If you think it’s good for people to live happier and more flourishing lives, there’s a possibility that technology and social progress will let people have much better and longer lives in the future (including those in the present generation). So, putting these first three points together, there could be many more generations, with far more people, living much better lives. The three dimensions multiply together to give the potential scale of the future.

If what you value is justice and virtue, then the future could be far more just and virtuous than the world today.

If what you value is artistic and intellectual achievement, a far wealthier and bigger civilization could have far greater achievements than our own.

Ways we can impact the future

We can speed up processes that impact the future. Our economy tends to grow every year, and this suggests that if we make society wealthier today, this wealth will compound long into the future, creating a long stream of benefits.

Even more importantly, we could precipitate the end of civilization, perhaps through a nuclear war, run-away climate change, or other disasters. This would foreclose the possibility of all future value. It seems like there are things the current generation can do to increase or decrease the chance of extinction.

There might be other major, irreversible changes besides extinction that we can influence, which could either be positive or negative. For instance, if a totalitarian government came into power that couldn’t be overthrown, the badness of that government would be locked in for a very long time. If we could decrease the chance of that happening, that would be very good, and vice versa. Alternatively, genetic engineering might make it possible to fundamentally change human values, and then these values would be passed down to every future generation. This could either be very good or very bad, depending on your moral views and how it was done.

Even if you’re not sure how to help the future, then your key aim could be to do research to work it out. We’re uncertain about lots of ways to help people, but that doesn’t mean we shouldn’t try. This is part of global priorities research, and there are plenty of concrete questions to investigate.

End copy and paste

Some famous papers worth noting:

Astronomical Waste by Nick Bostrom

On the Overwhelming Importance of Shaping the Far Future by Nick Beckstead

The case for strong longtermism by Will MacAskill and Hilary Greaves

Moral uncertainty

We see most of our ancestors as moral monsters, participating in moral atrocities like the enslavement of people, colonization, torture, etc. However, these actions were seen as morally OK, or even righteous, by their civilizations (obviously, the victims did not agree). How do we avoid these pitfalls today? And how should we act, knowing it's very likely we'll make decisions that will be regarded as moral atrocities in the future?

Collaboration

We should have a high-trust community so we can collaborate and solve problems together.

Seek the truth

"Rather than starting with a commitment to a certain cause, community, or approach, it’s important to consider many different ways to help and seek to find the best ones. This means putting serious time into deliberation and reflection on one’s beliefs, being constantly open and curious for new evidence and arguments, and being ready to change one’s views quite radically." - from EA website intro

How do EAs operate with all these beliefs in mind?

I said this in the prioritization section, but it mainly looks like working in these areas (the ones listed earlier), especially anything looking to minimize existential risk (x-risk), which is primarily AI Safety and Preventing Bioengineered Pandemics.

This is mainly because most EAs are total hedonic utilitarians (see the utilitarianism section earlier) who value future lives roughly one-to-one with present lives.

Popular jargon not covered

I was going to go on to generate a list of lots of other jargon, but I don't think it’s really that useful at all beyond what has already been stated. If you're in the community, you'll pick up knowledge over time. Trying to learn all the jargon up front seems like the wrong way to do things.

My philosophical beliefs

Nearly all of these are ethics or ethics-adjacent. I plan on writing new posts in about 3 months and again in about 9 months to see if these beliefs still hold true.

Primary

Strong Atheism

God or any other supernatural being certainly does not exist. Not even a God that only created and just watches.

*UPDATE* 8/31/2023:

I don’t love saying “certainly” anymore; it’s entirely unskeptical and un-Bayesian of me. I really don’t think God exists, I really truly don’t, and for all intents and purposes I’m still a strong atheist. But by some people’s definitions, I guess I’m just a soft atheist.

*END UPDATE*

If we live in a simulation, I think of this as different than God.

I recently heard there are many issues with this paper, namely the math involved. I'm sure the argument still holds up (that it's possible to run simulations), so I'll ignore these issues for now. I do find Nick Bostrom's recent apology to be absolutely repugnant though, for what it's worth.

I vaguely agree with Theological Noncognitivism.

I think atheism is the most important first step to take if you're trying to help the world. I'm an antitheist.

*UPDATE* 8/31/2023:

This feels pretty abrasive. The *most* important thing? Idk. There are plenty of atheists and non-religious people who contribute quite negatively towards the world, and many religious people who contribute quite positively.

My issue is that it’s hard to think like an EA, to think about prioritization and the like, when this stuff might not *really* matter according to your religious beliefs. Ex. Christianity doesn’t ask you to work on AI Alignment, or even (necessarily) to help the poor. It just asks you to follow some commandments, or in some sects, just to accept Jesus as your savior. It seems pretty hard to pivot from this belief to one where social impact is maximally important, although I’m sure there are people who hold both beliefs.

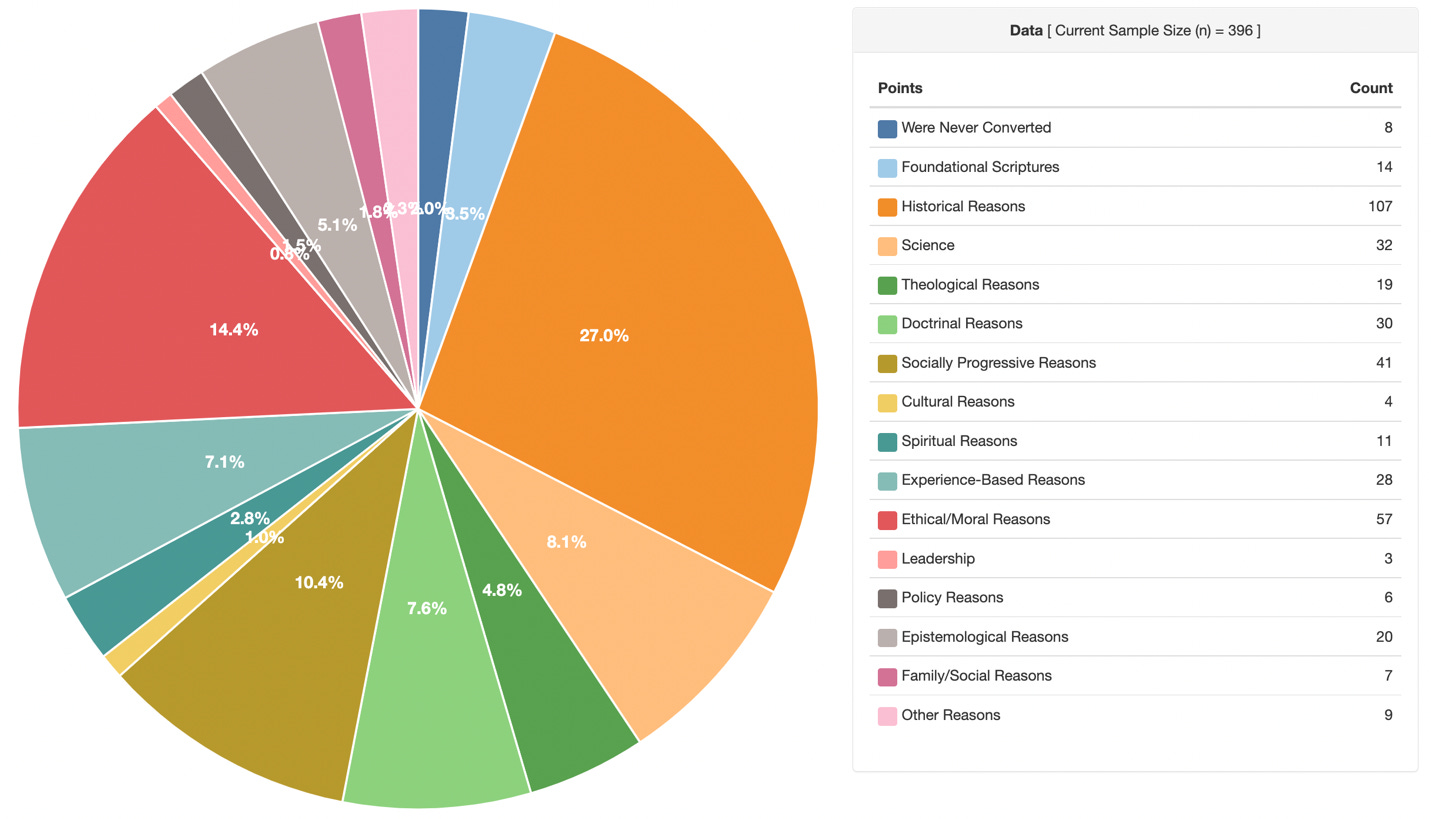

Also, I don’t know if I mention this elsewhere, but I am an Ex-Mormon. This seems relevant to note. From ages 7.5 to roughly 11.5, I was a devout believer. I then started to have questions, mainly for epistemological and theological reasons, although this seems to be on the rarer side among those who leave (I can’t find the link, so I’m dropping a chart above). I’d say I was fully out by the time I started 8th grade. It was a difficult transition.

*END UPDATE*

Suffering-focused ethics

Suffering is the most useful metric for determining your ethical framework.

See Tranquilism by Lukas Gloor at the Center on Long-Term Risk (CLR).

See the video Preventing Extreme Suffering Has Moral Priority by Brian Tomasik. Warning: extremely disturbing video, which includes some of the worst things I've ever laid my eyes on, like a man burning alive.

Anti-Natalism

Because I most value suffering in determining my ethical framework, I think it's wrong to bring beings into existence, unless you can guarantee their non-suffering (which is certainly not possible now).

It has been very good that some beings have existed, because of their efforts to stop suffering. I don't think more beings created now will be of value, because I think we're on the hinge of history.

I have a whole paper written up on why I don't want to have children. If you're interested in reading it, message me!

*UPDATE* 8/31/2023:

I don’t like this anti-natalism label.

I think it’s strongly associated with a deontological and moral realist way of thinking, and I am neither a deontologist nor a moral realist. I don’t like saying that it is purely “wrong” to bring beings into existence. I hope it’s the case that, because I exist, I’m able to create lots of positive social impact, such that consequentially, it will have been a very good thing that I existed. But if I had a button that I could press to instantaneously end all life in our universe and maybe the multiverse, I probably would? There might be some reasons not to do this; see Evidential Cooperation in Large Worlds (you will probably not understand this very much, and that’s OK; it’s more for the curious).

Obviously, there are many reasons not to consider doing this if the death was not instantaneous, or if you had to go out and personally do the killing, like dropping nukes or something like that. That seems really bad, and very likely to cause more suffering in expectation than it eliminates.

I think life is useful because it helps other life.

*END UPDATE*

Moral Circle Expansion

Anything that can suffer matters morally at relatively equal levels.

Animals, even insects

Extraterrestrials

Artificial Intelligence

People in the future, even the very far future, especially if we can affect them now

I find this difficult to say, but I think the ratio is actually roughly 1-to-1, meaning the death of one chicken is roughly as bad as the death of one person.

I'm pretty sure my main biases against this are:

I'm a person, therefore biased.

If I behaved as if this were true all the time, I would be unbearably sad (it would make animal death even worse than it already is).

Factory farming is really bad. Really, really bad.

The only arguments I have against this 1-to-1 ratio are:

Human capacity for suffering is higher because we're more complex individuals.

After thinking about things for a while, I don't think this argument makes sense. I don't think killing a million ants is any less bad than killing one person, even though a human brain is roughly a million times as large as an ant's. If you squish an ant and it runs around wildly in pain, that pain seems quite equivalent to human pain. Granted, I don't think I'll ever face a decision about whether to kill an ant or a person. If I did, I imagine I'd spare the person, and I'd still spare the person over 2 ants, but I think the number of ants at which I'd switch is much less than a million.

To make this more concrete, imagine the pain a dog experiences after you kick it sharply in the ribs with all your might. Does that pain actually look any better than the pain from a similar kick to a human?

Humans can reduce suffering for others in a way animals can't.

I think this is probably the best argument. But I imagine most humans are completely neutral in their impact, which renders this argument moot.

However, be wary: moral circle expansion does NOT necessitate the creation of more beings.

*UPDATE* 8/31/2023:

I think the nitty-gritty of comparing intelligence or capacity for suffering doesn’t matter very much. The big numbers are useful, though: if you think insects can suffer, then they’re urgently important. Even if an insect’s capacity to suffer were a billionth of a person’s, there are about 10 quintillion (!!!!!!!!), or 10,000,000,000,000,000,000, insects alive on Earth at any given moment. That works out to roughly 1.4 billion insects per person, so even at a billion to one, insects would collectively matter more than all of humanity. Insects don’t live very long (the median lifespan is maybe a couple of days at best), and there are still this many of them. And insects mattering only a billionth as much as people is probably not true. A billionth is a ridiculously small number; the real figure is probably higher.
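The arithmetic above can be checked in a few lines. This is a rough sketch, not a real estimate: the insect count is the ~10 quintillion figure quoted in the text, the ~7 billion human population is an assumption (it's the figure that yields ~1.4 billion insects per person; with ~8 billion people it's closer to 1.25 billion), and the one-in-a-billion weight is the deliberately pessimistic hypothetical from the paragraph.

```python
# Rough insect arithmetic. All inputs are assumptions:
#   - ~10 quintillion insects alive at once (figure quoted in the post)
#   - ~7 billion humans (the population implied by "1.4 billion insects per person")
#   - each insect matters 1/1,000,000,000 as much as a person (pessimistic hypothetical)
INSECTS_ALIVE = 1e19
HUMANS_ALIVE = 7e9
INSECT_WEIGHT = 1e-9


def insects_per_person(insects: float, humans: float) -> float:
    """How many insects there are for every living human."""
    return insects / humans


def insects_vs_humanity(insects: float, humans: float, weight: float) -> float:
    """Total moral weight of all insects divided by the total moral weight of all humans.

    Each human counts as 1; each insect counts as `weight`.
    """
    return (insects * weight) / humans


per_person = insects_per_person(INSECTS_ALIVE, HUMANS_ALIVE)
ratio = insects_vs_humanity(INSECTS_ALIVE, HUMANS_ALIVE, INSECT_WEIGHT)
print(f"Insects per person: {per_person:.2e}")
print(f"All insects vs. all humans, at a billion to one: {ratio:.2f}x")
```

At a billion to one, the insects alive right now would collectively carry roughly 1.4 times the moral weight of every human alive, and because the ratio is linear in the weight, any higher weight scales that up proportionally.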

*END UPDATE*

Singular cause prioritization

If you want to achieve positive social impact, you have to work on the most important cause that's dictated by your moral framework.

Let me rephrase that: you have to work on the thing you can have the most impact on. If your options look like the following:

Technical alignment work

AI safety community building

Biorisk policy

You pick the thing that allows you to counterfactually reduce the most suffering.

*UPDATE* 8/31/2023:

I don’t like my use of the word “have” here, for the same reasons I’ve stated earlier. I do think, though, that the best world is the one where we all mutually decide to reduce suffering as much as possible.

There are many people in this world who just cannot afford to think about social impact first. In fact, there are zero people on Earth who put social impact first in every decision they make; we’re human.

Something is obviously better than nothing. You should strive to do the best you can. But if you can’t, that’s OK too. I’m a moral anti-realist (I don’t think there are true rights and wrongs, and therefore no moral obligations, mainly because right and wrong would imply that something created right and wrong). However, if you want to maximize your social impact, to the degree that’s possible for a human being, prioritization is obviously important. If you reach a state where you care (or at least think you care) about social impact more than anything else, then upon some reflection, you should find that working on the thing where you think you can effect the most positive change actually brings you the most fulfillment. At least, in some sense of the word fulfillment. I don’t like this word very much, but it’s the best I can think of.

*END UPDATE*

Counterfactualism and Expected Value calculations

I'm much warier of expected value (EV) calculations now than I was when I first entered the EA sphere, but I think they still hold merit if they're high quality.

I think counterfactualism is probably the most important component of how I think and operate.
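To make counterfactual, expected-value reasoning concrete, here is a minimal sketch that scores the three example options from the prioritization section (technical alignment work, AI safety community building, biorisk policy). Every number is made up purely for illustration, and the model is a deliberate simplification: it assumes that if someone else would have done the work anyway, your counterfactual contribution is zero, so impact is scaled by the probability that nobody else would have done it.

```python
from dataclasses import dataclass


@dataclass
class Option:
    name: str
    p_success: float          # chance your work on this succeeds (made-up number)
    suffering_averted: float  # suffering averted if it succeeds, in arbitrary units (made-up number)
    p_done_anyway: float      # chance someone else would have done the same work without you (made-up number)


def counterfactual_ev(option: Option) -> float:
    """Expected suffering averted that would NOT have been averted without you."""
    return option.p_success * option.suffering_averted * (1.0 - option.p_done_anyway)


def best_option(options: list[Option]) -> Option:
    """Pick the option with the highest counterfactual expected value."""
    return max(options, key=counterfactual_ev)


options = [
    Option("Technical alignment work", p_success=0.02, suffering_averted=1000.0, p_done_anyway=0.5),
    Option("AI safety community building", p_success=0.10, suffering_averted=150.0, p_done_anyway=0.3),
    Option("Biorisk policy", p_success=0.05, suffering_averted=300.0, p_done_anyway=0.6),
]

for option in options:
    print(f"{option.name}: {counterfactual_ev(option):.1f}")
print("Highest counterfactual EV:", best_option(options).name)
```

Small changes to these invented inputs can flip the ranking, which is one reason to be wary of EV calculations unless the inputs themselves are high quality.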

Lexical Sufficientarianism?

Our ethical goal should be to minimize suffering as much as possible, which I think looks like prioritizing the worst off first.

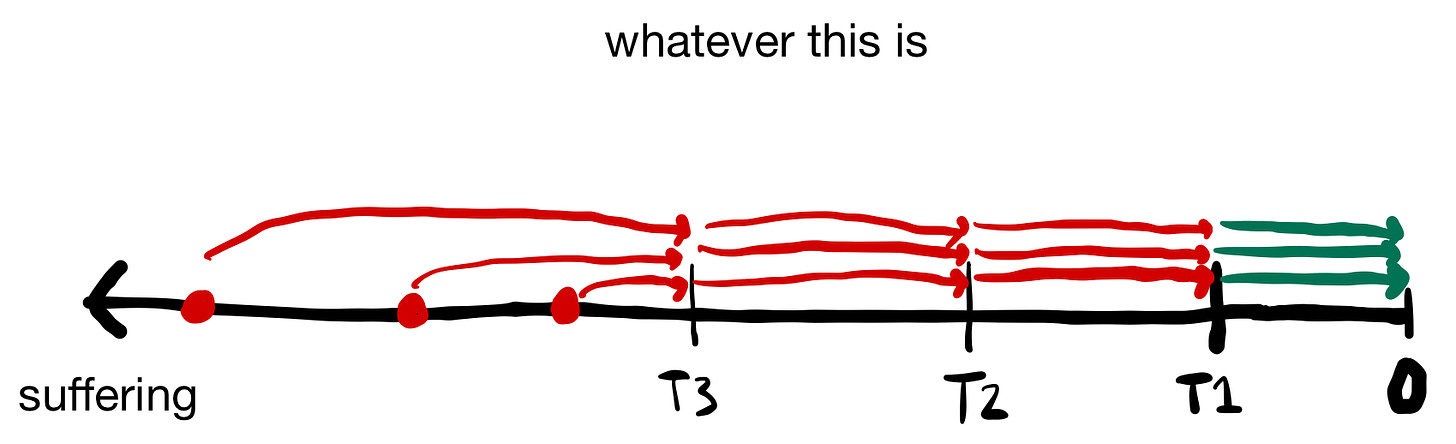

Because I think some values can be traded off (I'm willing to suffer through an hour-long line for a roller coaster), I think absolute negative utilitarianism is probably incorrect? I've spent hundreds of hours thinking about this, though, and I'm still uncertain. Right now, I'm closest to lexical utilitarianism. I think the best framework is to focus on getting those who are suffering the most past a certain lexical threshold, and then leapfrogging from there to get them to a neutral state of sufficiency.

Things could maybe look like this, where we bring people past certain thresholds over time.
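One way to make the lexical-threshold idea concrete is a two-level comparison: first judge outcomes only by how much suffering sits above the threshold, and only look at the remaining suffering to break ties. This is a minimal sketch under loud assumptions: the 0 to 10 suffering scale, the threshold of 7, and the example outcomes are all hypothetical, and real lexical views differ in the details. Python compares tuples lexicographically, which handles the tie-breaking.

```python
# Hypothetical suffering scale from 0 (none) to 10 (the worst imaginable).
EXTREME_THRESHOLD = 7.0  # hypothetical lexical threshold


def lexical_key(suffering_levels: list[float]) -> tuple[float, float]:
    """Sort key for an outcome (lower is better).

    First element: total suffering above the threshold. It takes absolute priority.
    Second element: total suffering at or below the threshold, used only to break ties.
    """
    extreme = sum(max(0.0, s - EXTREME_THRESHOLD) for s in suffering_levels)
    moderate = sum(min(s, EXTREME_THRESHOLD) for s in suffering_levels)
    return (extreme, moderate)


# Three beings start at suffering levels [9, 5, 5]; each outcome is one possible intervention.
outcomes = {
    "bring the worst off down to the threshold": [7.0, 5.0, 5.0],
    "greatly relieve the two moderate sufferers": [9.0, 1.0, 1.0],
}

lexical_choice = min(outcomes, key=lambda name: lexical_key(outcomes[name]))
total_sum_choice = min(outcomes, key=lambda name: sum(outcomes[name]))

print("Lexical rule picks:", lexical_choice)
print("Plain sum picks:", total_sum_choice)
```

The plain sum prefers the second outcome because it removes more total suffering, while the lexical rule prefers the first because it gets the worst-off being out of extreme suffering, matching the "worst off first" priority.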

I've thought very little about negative preference utilitarianism and unraveling thwarted preferences. My intuition is that this is the wrong way to go about things, because trying to satisfy thwarted preferences seems to succumb to utility monsters? I'm hoping to explore this more over the next month.

BUT. There is one rule, which I cover pretty closely in my last blog post. If you want to maximize utility, or minimize negative utility, you must be admired for what you're doing. Committing fraud? Not admirable. Robin Hood? Gray area. I think situations where total utility over time is maximized while you're not admired are extremely rare, so rare that they're probably impossible to predict.

Once all suffering is eliminated in the universe, I probably still value the increase of hedonistic wellbeing somewhat, but I probably value something else more, which I'll talk about in my final section.

Is it possible to eliminate all suffering in the universe? I don't know. Very likely not, because much of the universe lies outside our light cone, not even including the multiverse.

Less jargon-y answer: the universe is expanding (as described by Hubble's Law), and the farther away a region is, the faster it recedes from us. Because nothing can travel faster than light, and the expansion is speeding up, there are areas of the universe we'll never be able to reach, even if we start now!

See The Edges of Our Universe by Toby Ord.
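For a sense of scale, Hubble's Law says a distant region recedes at a velocity proportional to its distance, v = H0 · d. The sketch below computes the distance at which that recession velocity equals the speed of light (the Hubble radius), assuming a round value of H0 = 70 km/s/Mpc. This is only an order-of-magnitude illustration: the actual limit on what we could ever reach (the cosmic event horizon) depends on how the expansion rate changes over time, so it does not coincide exactly with the Hubble radius.

```python
# Hubble's Law: recession velocity v = H0 * d.
H0_KM_S_PER_MPC = 70.0   # assumed round value of the Hubble constant (km/s per megaparsec)
C_KM_S = 299_792.458     # speed of light in km/s
LY_PER_MPC = 3.2616e6    # light-years in one megaparsec


def recession_velocity_km_s(distance_mpc: float) -> float:
    """Speed at which a region at the given distance recedes from us, per Hubble's Law."""
    return H0_KM_S_PER_MPC * distance_mpc


# Distance at which the recession velocity reaches the speed of light.
hubble_radius_mpc = C_KM_S / H0_KM_S_PER_MPC
hubble_radius_gly = hubble_radius_mpc * LY_PER_MPC / 1e9

print(f"Hubble radius: {hubble_radius_mpc:.0f} Mpc, about {hubble_radius_gly:.0f} billion light-years")
```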

However, maybe it’s possible to acausally affect those outside of our reach? Or maybe faster-than-light (FTL) travel or communication is possible.

See Multiverse-wide Cooperation via Correlated Decision Making by Caspar Oesterheld at the Center On Long-Term Risk (CLR).

*UPDATE* 8/31/2023:

I don’t think I care about raising hedonistic wellbeing at all at this point. I also have no clue how my suffering scale works, like whether I think beings suffering at a more extreme level are exponentially more urgent than others. I don’t think it matters very much for my day-to-day thinking, unless I spontaneously decided I should work on stopping cluster headaches. Maybe I should think about this more.

Oh yeah. I also don’t really care about raising hedonistic wellbeing because I think the abolition of suffering is just this? States of pure peace and tranquility are the most ideal. Maybe we bio-hack ourselves and find out there are other things that matter morally, but that seems to raise the problem of desire. I do not know. I doubt this happens in any meaningful way. It does seem possible that other value systems matter due to Evidential Cooperation in Large Worlds, which I already discussed very briefly, but here’s a link again.

*END UPDATE*

Veganism

If you have the physical means to be vegan, you're morally obligated to do so. See my moral circle expansion section for why I think it's so morally demanding.

*UPDATE* 8/31/2023:

I really don’t like how I wrote this. Again, I call something a moral obligation, which reflects a deontological and moral realist framework. I’m planning on writing a “why vegan” post soon.

There is a well-known ethics researcher, Brian Tomasik, who eats dairy but avoids driving and general travel in order to not harm insects. He also does not eat rice, in order to not harm frogs and insects during harvest, and similarly doesn’t eat almonds, whose farming uses an immense number of bees. I’ve also heard a general idea that eating dairy might cause less suffering than avoiding it, because perhaps more insects are killed in other types of plant harvest? I don’t know.

While veganism is obviously important in contributing to an animal-product-free world, and ultimately a world devoid of suffering, I’m not a deontologist. There are many ways I go about my life that almost certainly harm insects, like washing my face. Overwhelmingly, the benefits of veganism are personal:

Extreme mental clarity. You actually fight the cognitive dissonance, and are able to think about things in a way I would’ve never thought possible.

Signaling. You’re doing a hard thing, going against the grain. It shows something about who you are, that you’re actually putting your money where your mouth is.

Reminder. Every time you eat, you’re faced with a reminder of a decision/promise you made with yourself, for the sake of others. Something almost like the Christian sacrament, I guess.

Health? Many vegans are not that healthy? Vegans seem to live longer than others in the US, but many vegans don’t think very much about health. Neither do omnivores, or other Americans. I think that, all things being equal, the healthiest beings will be those on a perfect whole-foods (or similar) plant-based diet rather than an animal-based one. Probably.

I think the main health benefit for me and most people, though, is something like: I can’t gorge on Wawa late at night, I choose a bean burrito at Taco Bell instead of a Beefy Gordita Crunch, etc. I’m much more in control of my food and eating habits. When I got my driver’s license and a job in high school, I significantly worsened my eating habits and gained weight by constantly eating out. It’s harder to find high-calorie vegan food.

*END UPDATE*

It's OK for morals to be demanding, even infinitely

See the demandingness objection of utilitarianism.

In my opinion, a sign of a good moral framework is that it's infinitely demanding. For example, Christianity, a framework that isn't morally demanding in this sense, is bad because there is a threshold you can pass above which you don't get any more reward in heaven.

I really don't know how to behave if ethics are infinite.

TL;DR: the number of beings in our universe is almost certainly finite. BUT, if a multiverse exists and there is no upper bound on how many universes it contains, there could be an infinite number of them. If there are infinitely many universes, there are also infinitely many beings. If your ethical system values utility, anything you do to increase or decrease utility in the world is counterfactually useless and creates no impact (you can't add to or subtract from infinity), unless you can somehow acausally (or causally?) affect the beings in other universes.
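The "you can't add to or subtract from infinity" point can be illustrated with floating-point infinity. This is only a loose analogy (IEEE floating-point infinity is not a model of infinitely many beings, and infinite ethics is much subtler than this), and it assumes the total utility really is infinite.

```python
import math

# Assumption: infinitely many beings, so total utility is infinite.
total_utility = math.inf

after_helping = total_utility + 1_000_000  # you avert an enormous amount of suffering
after_harming = total_utility - 1_000_000  # or you cause an enormous amount of it

# Neither action changes the total.
print(after_helping == total_utility)
print(after_harming == total_utility)

# Comparing two infinite totals by subtraction isn't even defined: inf - inf is NaN.
print(math.isnan(after_helping - after_harming))
```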

Hedonistic Imperative

There is an imperative to abolish suffering, possibly through biological means.

Hard Determinism

I think holding people accountable for their actions is wrong, and I don't think people have free will. I think people cause harm due to their biological makeup, and the nature of society and influence.

This doesn't mean I like all people, especially those who do bad things! It just means that, if someone who was doing bad things were to stop and sincerely promise never to do bad things again, I think that's all it takes to be forgiven. I also don't hold anger against anyone.

And if people do bad things again after promising not to, I again don't hold it against them.

As a result, I think prisons are wrong. I could support a system of rehabilitation in today's society, but I think the actual terminal goal is to make committing harm completely undesirable.

*UPDATE* 8/31/2023:

Again, I don’t like the deontological and moral realist use of the word wrong.

There’s some joke about a utilitarian judge that goes something like:

“You’re being sentenced to prison for 30 years. Thank you so much for your service in helping prevent future crimes from being committed.”

*The joke being that utilitarians don’t care about retributive justice or punishment for its own sake, but some might still value it as a crime deterrent, in which case the prisoner is doing a great thing for society by preventing future crime.*

I’m not informed enough to know how well retributive justice works as a crime deterrent. I imagine not very well? I imagine what actually helps the most is not harsher prisons, or maybe even prison rehabilitation, but something like better sex education and some wealth redistribution. Making bad decisions as undesirable as possible. I am writing a post on this soon.

*END UPDATE*

Secondary

Moral Anti-Realism

I'm probably a moral anti-realist ????? But I don't think it matters that much, as long as we just find the best way to do things.

*UPDATE* 8/31/2023:

I’m a moral anti-realist.

*END UPDATE*

Physicalism

I don't know enough

Constructivism

Most Formal Epistemology

Most Decision Theory

But I'm learning as fast as I can!

Most Logic

That should change when I go back to university.

*UPDATE* 8/31/2023:

I am now officially studying Logic, Information, and Computation, instead of Physics: Business & Technology!

*END UPDATE*

I don't care

Aesthetics

Others

My personal beliefs/predictions

The future is extremely wild

See exploratory engineering and grand futures and the works of futurists like Eric Drexler and Anders Sandberg.

AI

AI Alignment is the world’s most pressing issue and is overwhelmingly likely to be the most difficult issue we ever face.

My median AGI timeline is around 2033. I would not be surprised if AGI came as early as 2028 or so, but I would be very surprised if it came earlier. I would be very surprised if AGI came after 2038.

Unaligned AGI, even "slightly" (whatever this means), will be a bad outcome for sentience.

I also think that nearly all scenarios where we have unaligned AGI lead to suffering catastrophes (s-risk) for other sentience in the universe because of the Cooperative AI problem.

I think it's overwhelmingly more likely that we get a singularity, a single artificial superintelligence, than multiple AGIs at once. Trying to figure out which option is better (even if both are negative) seems quite important if you can influence the outcome, especially if multiple AGIs lead to cooperation conflicts.

If you’re curious, I think you should read this report by Anthropic first.

*UPDATE* 8/31/2023:

Yeah, I mostly still agree. Idk, maybe I’m a bit bullish, but I don’t think my view is that far out of left field. Many have much less ambitious views on when AGI will come, or how transformative it will be. I also don’t like my general lack of Bayesianism when I say things like “will”.

The main issue I have is with how I just name-dropped a bunch of things, kind of out of nowhere. I noticed this when I wrote it, and didn’t bother to fix it. This likely left the reader confused and unwilling to take me seriously.

*END UPDATE*

The Meaning of Life

Wikipedia Entry

Copy and paste start

"What is the meaning of life?" is a question many people ask themselves at some point during their lives, most in the context "What is the purpose of life?".

Some popular answers include:

To realize one's potential and ideals

To chase dreams.

To live one's dreams.

To spend it for something that will outlast it.

To matter: to count, to stand for something, to have made some difference that you lived at all.

To expand one's potential in life.

To become the person you've always wanted to be.

To become the best version of yourself.

To seek happiness.

To be a true authentic human being.

To be able to put the whole of oneself into one's feelings, one's work, one's beliefs.

To follow or submit to our destiny.

To achieve eudaimonia, a flourishing of human spirit.

To evolve, or to achieve biological perfection

To evolve, changing from generation to generation.

To survive, that is, to live as long as possible, including pursuit of immortality (through scientific means).

To live forever or die trying.

To maximize one's genes' advantage in terms of natural selection, by having many children or indirect descendants via relatives.

To replicate, to reproduce. "The 'dream' of every cell is to become two cells."

To seek wisdom and knowledge

To expand one's perception of the world.

To follow the clues and walk out the exit.

To learn as many things as possible in life.

To know as much as possible about as many things as possible.

To seek wisdom and knowledge and to tame the mind, as to avoid suffering caused by ignorance and find happiness.

To find the meaning or purpose of life.

To find a reason to live.

To resolve the imbalance of the mind by understanding the nature of reality.

To do good, to do the right thing

To leave the world as a better place than you found it.

To do your best to leave every situation better than you found it.

To benefit others.

To give more than you take.

To end suffering.

To create equality.

To challenge oppression.

To be generous.

To contribute to the well-being and spirit of others.

To help others, to help one another.

To take every chance to help another while on your journey here.

To be creative and innovative.

To forgive.

To accept and forgive human flaws.

To be emotionally sincere.

To be responsible.

To be honorable.

To seek peace.

Meanings relating to religion

To reach the highest heaven and be at the heart of the Divine.

To have a pure soul and experience God.

To understand the mystery of God.

To know or attain union with God.

To know oneself, know others, and know the will of heaven.

To love something bigger, greater, and beyond ourselves, something we did not create or have the power to create, something intangible and made holy by our very belief in it.

To love God and all of his creations.

To glorify God by enjoying him forever.

To spread your religion and share it with others.

To act justly, love mercy, and walk humbly with your God.

To be fruitful and multiply.

To obtain freedom. (Romans 8:20–21)

To fill the Earth and subdue it.

To serve humankind, to prepare to meet and become more like God, to choose good over evil, and have joy.

[He] [God] who created death and life to test you [as to] who is best in deed and He is Exalted in Might, the Forgiving. (Quran 67:2)

To worship God and enter heaven in afterlife.

To love, to feel, to enjoy the act of living

To love more.

To love those who mean the most. Every life you touch will touch you back.

To treasure every enjoyable sensation one has.

To seek beauty in all its forms.

To have fun or enjoy life.

To seek pleasure and avoid pain.

To be compassionate.

To be moved by the tears and pain of others, and try to help them out of love and compassion.

To love others as best we possibly can.

To eat, drink, and be merry.

To have power, to be better

To strive for power and superiority.

To rule the world.

To know and master the world.

To know and master nature.

To help life become as powerful as possible.

Life has no meaning

Life or human existence has no real meaning or purpose because human existence occurred out of a random chance in nature, and anything that exists by chance has no intended purpose.

Life has no meaning, but as humans we try to associate a meaning or purpose so we can justify our existence.

There is no point in life, and that is exactly what makes it so special.

One should not seek to know and understand the meaning of life

The answer to the meaning of life is too profound to be known and understood.

You will never live if you are looking for the meaning of life.

The meaning of life is to forget about the search for the meaning of life.

Ultimately, a person should not ask what the meaning of their life is, but rather must recognize that it is they themselves who are asked. In a word, each person is questioned by life; and they can only answer to life by answering for their own life; to life they can only respond by being responsible.

Copy and paste end

Copy and paste start

From Anders Sandberg, on what the meaning of life could look like in the far future

Survival: humanity survives as long as it can, in some form.

"Humble futures": humanity survives for as long as is appropriate without doing anything really weird. People have idyllic lives with meaningful social relations. This may include achieving justice, sustainability, or other social goals.

Gardening: humanity maintains the biosphere of Earth (and possibly other planets), preventing them from crashing or going extinct. This might include artificially protecting them from a brightening sun and astrophysical disasters, as well as spreading life across the universe.

Abolishing suffering: humanity finds ways of curing negative emotions and suffering without precluding good states. This might include merely saving humanity, or actively helping all suffering beings in the reachable universe.

Happiness: humanity finds ways of achieving extreme states of bliss, contentment, meaning, or other positive emotions. This might include local enjoyment, or actively spreading minds enjoying happiness far and wide.

Deep thought: humanity develops cognitive abilities or artificial intelligence able to pursue intellectual pursuits far beyond what we can conceive of in science, philosophy, culture, spirituality and similar but as yet uninvented domains.

Posthumanity: humanity deliberately evolves or upgrades itself into forms that are better, more diverse or otherwise useful, gaining access to modes of existence currently not possible to humans but equally or more valuable.

Creativity: humanity plays creatively with the universe, making new things and changing the world for its own sake.

Copy and paste end

Why copy and paste all this stuff?

There are many, many ways that people wish to live their lives. Think very carefully about how you want to live yours.

In the far future, if suffering is completely abolished somehow, then I'd hope to use scientific inquiry to understand the nature of the universe.

Where did we come from? Why are we here? Etc.

(Probably) more to come in the future about my confusion over why people don’t optimize for life goals that have infinite gain.

Afterword

I did not want to write another long blog post, but I did anyway! Sorry! This one ended up being a little over 6,000 words of my own material plus ~2,000 words copied and pasted. I thought about splitting the blog into multiple posts, but it seemed to make sense to keep it all together. This took me close to 40 hours (hopefully I’ll get more efficient as I keep going!!! ……). I’m uploading this at 4:45am, so there are bound to be errors. I’ll do some quick reads in the coming days, but I wanted to get this OUT.

I'm hoping to get out 2-3 blog posts in the next week to make sure I'm still on track for my goal of 50 blog posts this year.

*UPDATE 8/31/2023*:

Yeah, obviously I am not on track for this goal! Whoops. But I do hope to get something out soon, now that I’m back in school. Also, there are definitely more than 6,000 words now, but I’m too lazy to check the word count.

*END UPDATE*

Feel free to leave comments or DM me on Twitter @brandonsayler! If you don’t have Twitter, you can also email me at brandon(dot)sayler@gmail(dot)com, or you can try your luck finding me on Facebook Messenger.

I really like this post! It's nice to have your views spelled out so explicitly. I found myself agreeing with a lot of this and pretty confused about other bits. Some of the things that I most want answers/clarification for:

_______________________________

"I think atheism is the most important first step to take if you're trying to help the world. I'm an antitheist."

This seems like a very strong statement. Surely one could believe in some deistic god/the "god of philosophers" and this wouldn't affect their moral views very much! Also, if no god implies moral anti-realism, then it seems like becoming an atheist would NOT be an important step towards helping the world because "helping the world" is no longer a meaningful concept. Maybe I am misunderstanding the realism/anti-realism debate though.

____________________________

"I have a whole paper written up on why I don't want to have children. If you're interested in reading it, message me!"

I have some intuitions against antinatalism, so I am very interested in reading this!

[comment got cut off; continued in the reply]