What it means to be a hero

The dictionary defines heroism as great bravery, but it defines a hero as a person who is admired or idealized for courage, outstanding achievements, or noble qualities. These are actually quite different. Heroism doesn’t require admiration. And being a hero doesn’t necessarily require bravery.

I’d like to redefine a hero here as someone who tries to achieve social impact in a way others admire. This could be in a positive or negative manner: after all, there are many examples of heroes who are actually trying to do something bad but are admired in a heroic manner instead of a villainous one (take Eric Harris and Dylan Klebold’s enshrinement by other school shooters). I also think that you don’t actually have to achieve social impact in order to be considered a hero: the mother who goes back into the house on fire to save her children, but dies in the fire and also doesn’t save her children, is still considered a hero. I also don’t think that a hero has to be courageous to be defined as a hero, but you do probably have to appear to be brave.

I doubt Superman is ever afraid of a fight (unless kryptonite is involved), but his acts are still considered fit for a hero.

There’s something else that’s missing from this definition though. Overwhelmingly, being a hero requires some form of self-sacrifice. You must put yourself in immense danger, work an insane number of hours for low pay, not enjoy nice things, not see your family and friends for extended periods of time, etc. Heroes often “do the thing” even though they don’t want to.

This is why US Army Soldiers are considered heroes and billionaire philanthropists like Bill Gates are not—or at least, he’s not considered a hero by the overwhelming majority of the population.

Bill Gates is still (mostly) admired for his philanthropic acts, as well as his aid in the creation of Microsoft, but he’s not really considered a hero for these. His self-sacrifice seems somewhat forced: he has worked an insane number of hours (for insanely high pay), he has probably spent a number of hours not seeing his family and friends, and he’s given up “much” of his fortune, but Gates could stop at pretty much anytime and be just as well off as he once was. It’s also hard for the layperson to measure the impact that Gates has on the world, so we don’t really care that we lose him there.

Even though most people join the US Army for the benefits, the common perception is that people join the Army for patriotic reasons: that most soldiers are putting their lives on the line because they want to protect the American people, not because they want free college, long pensions, and job stability. There is obviously still self-sacrifice here due to the danger, not being able to see family, having to opt into a work contract, earning relatively low pay, etc., but I imagine that if the average American knew the reasons most people join the military, the public perception of their heroism would diminish. If I had to guess, this is probably the secondary reason cops are viewed as less heroic now (behind police brutality).

I can’t find any statistics, but I imagine patriotism trends from greatest to least: Marines, Army, Navy, Air Force, Coast Guard. Why? For the layperson, I think this is the order of prestige for each branch. It is also the order of boot camp length, from longest to shortest, which matches the general consensus on which boot camps are the most difficult. I think the most patriotic enlistees are likely to do whatever it takes to protect their country, hence being more likely to join the Marine Corps than the Coast Guard.

Anecdotal: I talked to a Marine Corps recruiter in high school when I was vaguely thinking about joining the Marine Corps Band to play saxophone, and we did a test to figure out if my values *aligned* with the Marine Corps. Surprise: they did not! People that join the Marines tend to value patriotism, brotherhood, and overcoming fear much more than other branches according to him. So, he recommended I go talk to a Navy or Air Force recruiter instead hahaha.

I guess this above paragraph mostly fits in with this “in a way others admire” clause, but I felt it needed a deeper explanation.

What it means to be a counterfactual hero

Counterfactualism is the method used to determine how much impact something has actually had on the world. How does the world look different because you or I exist? If we were to have two worlds, one where you or I existed and one where you or I didn’t, how would these worlds differ?

If someone has a heart attack in front of me and their heart fails, and I step in, perform CPR, and save their life, I’d be a hero. However, if there were other people around me, it’s likely someone else would've stepped in (bystander effect aside) if I hadn’t and performed CPR instead of me. In this scenario, I don't have any counterfactual impact.

Who has the impact then? Well, you'd still get some "regular impact" points. There would just be no counterfactual impact points generated.
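Here's a rough sketch of that two-worlds comparison in Python. The scoring (1.0 if the person survives, 0.0 if they die) and the function name are my own assumptions for illustration, not a standard measure.

```python
def counterfactual_impact(outcome_with_me: float, outcome_without_me: float) -> float:
    """Difference between the world where I act and the world where I don't."""
    return outcome_with_me - outcome_without_me


# Hypothetical scoring: 1.0 = the person survives, 0.0 = the person dies.
SURVIVES, DIES = 1.0, 0.0

# Crowded room: if I don't step in, someone else performs CPR and the person lives.
crowded = counterfactual_impact(SURVIVES, SURVIVES)

# Empty room: if I don't step in, nobody does and the person dies.
alone = counterfactual_impact(SURVIVES, DIES)

print(crowded)  # 0.0
print(alone)    # 1.0
```

The "regular impact" in both rooms is the same (a life was saved); only the comparison world changes.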

There are some arguments (e.g., Shapley values) that we should divvy up impact points so that each of the n people in the vicinity who can and will perform CPR gets 1/n of the impact, but I think this is wrong, even if the person who actually ends up performing CPR gets more than 1/n of the impact.
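For the curious, here's a minimal sketch of how the Shapley split works out in the CPR case. I'm assuming a toy "game" where the life is saved (value 1) as long as at least one willing bystander is present; the bystander names and the `life_saved` function are made up for illustration.

```python
from fractions import Fraction
from itertools import permutations


def shapley_values(players, value):
    """Exact Shapley values: average each player's marginal contribution
    over every possible order in which the players could arrive."""
    totals = {p: Fraction(0) for p in players}
    orderings = list(permutations(players))
    for order in orderings:
        coalition = frozenset()
        for p in order:
            with_p = coalition | {p}
            totals[p] += Fraction(value(with_p)) - Fraction(value(coalition))
            coalition = with_p
    return {p: total / len(orderings) for p, total in totals.items()}


def life_saved(coalition):
    # Assumption: anyone in the coalition can and will perform CPR successfully.
    return 1 if coalition else 0


bystanders = ["me", "nurse", "lifeguard"]  # hypothetical bystanders
shares = shapley_values(bystanders, life_saved)
print(shares["me"])  # 1/3
```

With three interchangeable bystanders, each gets exactly 1/3 of the credit, regardless of who actually knelt down and did the compressions.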

Maybe I broke a few ribs while bringing this person back to life, but if I had let someone else step in, they would’ve avoided the rib breaking and still brought the person back to life. My counterfactual impact would be negative because I caused harm by trying to effect change. It'd be even worse if I completely failed to save this person's life, granting that it would've been saved if I had allowed more competent people in the room to volunteer first.

Here, you'd still get "regular impact" points, and they’d be just about equal to the regular impact points you get from not breaking ribs. Unless you end up killing someone—you’ll probably still earn a few “regular impact” points, but significantly less than if they’d lived.

We'll say a counterfactual hero is someone who achieves critical social impact in a way others admire.

I'm not even sure if the use of "critical social impact" means anything in real life, but I mean critical as a substitute for counterfactual.

You're still a hero if you step in and save the person's life, even if you crack a few ribs. In fact, you're likely still a hero even if you step in and they die, because you tried to achieve social impact. But you are not a counterfactual hero.

Although I could imagine a situation in which an overconfident brute shoves others aside to perform CPR and the person ends up dying, in which case the brute would be branded a villain.

Strange how the line between the two is so faint.

We can extend the concept of counterfactualism elsewhere too. Think of Albert Einstein and his many contributions: General and Special Relativity, the Photoelectric Effect, Brownian Motion, Mass-energy equivalence, Planck-Einstein relation, Einstein field equations, Bose-Einstein statistics, Bose-Einstein condensate, Einstein's cosmological constant, Unified Field Theory (UFT), ensemble interpretation of quantum mechanics, etc.

If Einstein never existed, these discoveries would've almost certainly been made at some point along the way. So, Einstein's impact is only how much impact these discoveries had on the world in which he exists, versus the world where he does not exist and these discoveries come later.
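A crude way to put numbers on this, assuming (unrealistically) that a discovery produces a fixed amount of value per year once it exists. Every number below, including the "ten years later" guess, is made up purely for illustration.

```python
def speedup_impact(yearly_value: float, year_with: int, year_without: int, horizon: int) -> float:
    """Value accrued by `horizon` in the world with the person,
    minus the value accrued in the world where the discovery arrives later."""
    value_with = yearly_value * max(0, horizon - year_with)
    value_without = yearly_value * max(0, horizon - year_without)
    return value_with - value_without


# Hypothetical: a discovery made in 1905 that would otherwise have arrived in 1915.
good_discovery = speedup_impact(1.0, year_with=1905, year_without=1915, horizon=2000)

# If the discovery does net harm each year, arriving early is counterfactually negative.
harmful_discovery = speedup_impact(-1.0, year_with=1905, year_without=1915, horizon=2000)

print(good_discovery)     # 10.0
print(harmful_discovery)  # -10.0
```

Under this toy model the counterfactual impact is just the ten years bought (or lost), not the full 95 years of value the discovery has produced.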

We can toy with this thought and say that perhaps the discovery of mass-energy equivalence (E=mc^2) by Einstein was counterfactually negative, possibly negative enough to negate all the time that was bought with his other discoveries. After all, it was from this knowledge that Einstein surmised that a nuclear bomb could be created. Einstein then warned President FDR during WWII, urging the US to begin what became the Manhattan Project, which, thanks to Einstein's credibility, it did. This not only led to the attacks on Hiroshima and Nagasaki, but also post-war worldwide turmoil via nuclear escalation and the Cold War. Einstein said, "had I known that the Germans would not succeed in developing an atomic bomb, I would never have [warned FDR]."

However, you can keep playing this game and say that if mass-energy equivalence had been discovered later by someone else, they could've shared it with someone who desired more harm! But this seems unlikely, because the Manhattan Project was started during wartime: an equivalent project likely would not have been started during peacetime, which is the state great powers are usually in.

But who knows? I'm spending too much time speculating here. You get the point.

Oh yeah. I’m not actually sure if Einstein is a hero or not, just in general. When I think Einstein, I don’t think “hero”, but I’m sure there are many (especially physicists and other scientists) who look up to him as a hero. I’m just using him as an example of regular counterfactualism.

Let’s look back at a counterfactually negative example of Eric Harris and Dylan Klebold. If they don’t exist, Columbine certainly doesn’t get shot up, and possibly school shootings don’t become nearly as sensationalized as they are today. It seems to be much easier to have a counterfactually negative impact than a counterfactually positive impact.

It actually seems really hard to have positive counterfactual impact, or at least to know whether or not the impact you’ve achieved is truly counterfactually positive.

We can also look at Bill Gates, who is certainly (>95%) having a positive counterfactual impact on the world through his philanthropic efforts, but he still isn’t labeled a hero. So, achieving counterfactual impact still doesn’t earn you the hero label.

Pretty much all heroes in literature, history, and present-day are your run-of-the-mill hero. It's quite unclear as to whether or not any counterfactual heroism is achieved.

Historic figures that maybe make this bar:

Abraham Lincoln. There was probably no one else who could've done the job as president of the US as well as he did during the Civil War. Although, even in the case that the Union loses the war to the Confederacy in the Civil War, I'm confident that people eventually come around and reverse the horrific practice of enslaving people.

Alan Turing. Without his work, the Allies could've very well lost to the Axis powers during WWII. Surely no one else would've figured out how to crack code as well as Turing.

Literary figures that maybe make this bar:

Harry Potter. Without Harry Potter, I don't think Voldemort ever gets killed (after all, that is what the prophecy says?). But I guess if not Harry, then Neville is Voldemort's adversary, and if he's killed, eventually someone else likely steps up and saves the day.

Sorry if you haven’t read Harry Potter and don’t get this analogy!

What it means to be a utility maximizing hero (and a negative utility minimizing hero)

We can go a step further than this though.

Let’s take Abraham Lincoln. Let’s compare Lincoln in our world to a world in which Lincoln makes better executive strategies in the Civil War to cause less death. Or maybe a smarter Lincoln decides to avoid the theatre less than a week after a tumultuous war ends, which saves his life and leads to Reconstruction having much better results. Or maybe Lincoln uses his genius to make incredible medical breakthroughs instead of leading the United States (I have no idea if this is more useful or not).

You can keep playing this game until you get to the point where you don't get any more utility. You've created a world where Lincoln precisely behaves in a way that he creates the most amount of wellbeing for others.

I have no clue what actions lead to Lincoln creating the most amount of wellbeing, but there is a chain of actions that leads to a world where this happens. In fact, there are probably multiple chains of actions that lead to a world where this happens (a world where Lincoln avoids using the restroom for 5 mins longer than another world, these worlds look the same).

So we'll say a utility maximizing hero is someone who critically creates the greatest amount of positive utility (wellbeing) that they can, in a way others admire. Conversely, we'd say a negative utility minimizing hero is someone who critically reduces as much negative utility (suffering) as they can, in a way others admire. We could also apply this to the villainous arc we’ve been talking about by defining a negative utility maximizing hero or a utility minimizing hero.

If you’re unfamiliar with the word utility, you can read more in the first section here.

There’s probably no one in the history of the world that has ever been completely utility maximizing. Even Jesus could’ve spent much more time healing the sick and wounded than he did (one of the reasons I find the perfection argument of Jesus weird).[1]

However, there are definitely counterfactual heroes (and non-heroes) that exist that have maximized more utility than others or have pivoted their actions to maximize more utility than they would have otherwise if they had stayed on their course.

Why am I spending all this time talking about heroism?

For a number of reasons. Hopefully I can explain them well enough.

I think that a lot of people in the world are actually optimizing for being a hero, instead of optimizing for positive counterfactual impact. I also think that there are many reasons why you should optimize for being a utility maximizing hero, instead of just a utility maximizing person, for pseudo rule utilitarianism reasons. I also think that there are many reasons why you should not idolize heroes.

Hero optimization for the wrong reasons

There are people who optimize for being a hero without any care for impact, or at least that isn’t their first goal. They pursue “impact” but really only for prestige, money, fame, etc.

And I’m not talking about this “it’s impossible to be truly altruistic” claim—although to be fair, I don’t think this claim is true.

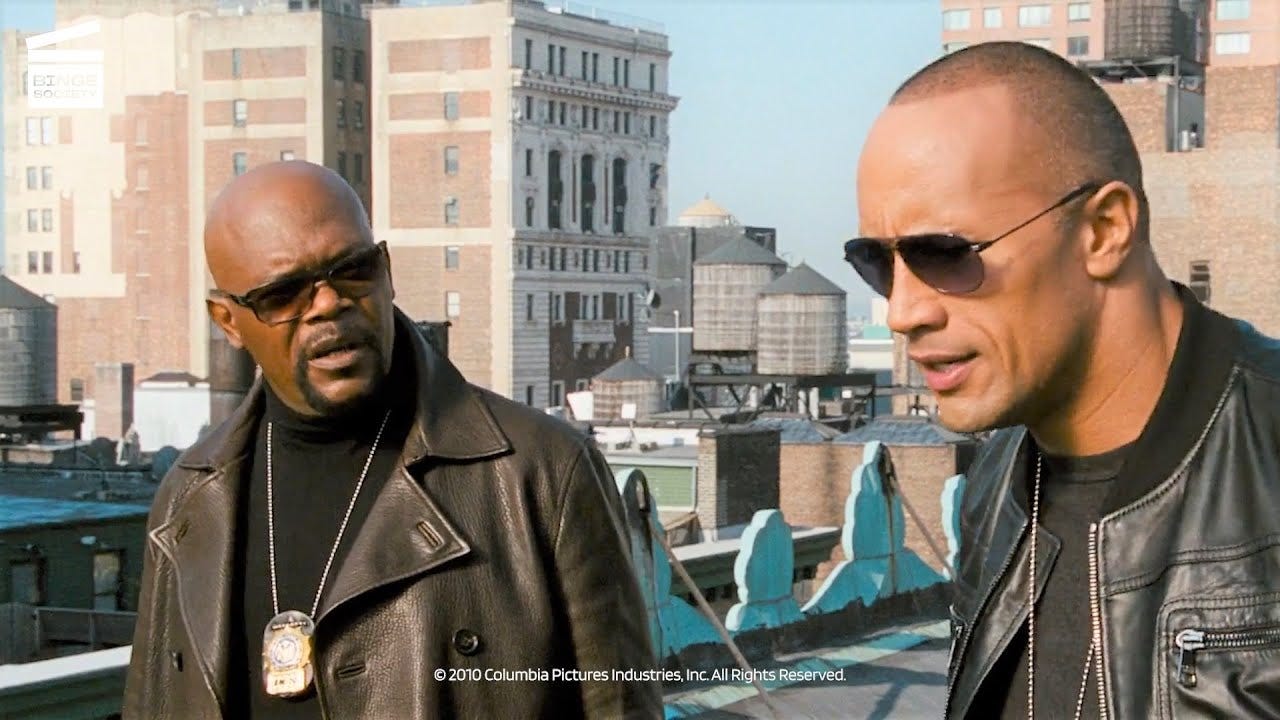

I think of the movie The Other Guys, starring buddy cops Mark Wahlberg and Will Ferrell, although the movie originally starts out with buddy cops Dwayne “The Rock” Johnson and Samuel L. Jackson. In one of the first scenes, The Rock and Jackson go on a high-profile, high-speed criminal chase in New York City and end up causing a lot of havoc and damage unnecessarily (and I’m pretty sure most of the criminals actually get away because of this). They’re hailed as heroes anyways, and admired all across the city, due to their bravado and charisma. The thing is that The Rock and Jackson don’t care at all about the impact they’re having. They just want to be celebrity heroes.

In the real world, I think there are lots of people like this: cops, celebrity activists, scientists and researchers, doctors, politicians, etc.

To be fair, I don’t think that all people working in these occupations behave this way. But I think a large number do.

However, I’m most concerned about trends I’ve seen in the Effective Altruism Community. I fear that there are a number of people in the movement who are optimizing for heroism, prestige, etc. instead of counterfactually positive impact.

There are multiple ways this happens:

With pride, people aimlessly hope to assume technical roles (like work in technical AI safety) because they want to be heroes, but they don’t have the technical chops to make counterfactual impact here (because it's very difficult to!), and would be better off doing operations or movement building or focusing on another cause area.

Keep in mind: the best heroes almost always require role players, and without them, their impact would never be achieved! Role players supply counterfactual impact that key heroes can’t achieve alone.

People assume roles like work in technical AI safety, because they want to be heroes, and not because they care about impact. Or possibly they care somewhat about impact, but heroism takes precedence, or even the other way around. Heroism isn’t always the rooted thing here: it could be prestige instead that they’re optimizing for. Why is this bad?

It means they’re impact agnostic. They happen to think that solving the alignment problem is the thing that’s most heroic, the thing that will bring them the most prestige. This might mean spending resources on making sure they are the ones that solve the alignment problem.

It means they might work on an alignment solution they personally think isn’t promising, but others do, because this might be the best way to maximize their prestige.

This could also look like seeking work at places like Anthropic or other organizations viewed as the most prestigious or heroic.

They’re less likely to stay in AI safety and could pivot to working on AGI capabilities research if they’re naive enough to think that they can safely create AGI, because this would maximize their “heroism points” if they succeed.

I imagine this is some of Sam Altman’s reasoning for working on AI capabilities as CEO of OpenAI.

People spend lots of time doing things like “climbing the EA social ladder”, or other movement social ladders, which might be somewhat useful for networking purposes, but only to a point. The value of this does keep increasing though if you want people to think of you as a really good and heroic person.

Utility Maximizing Hero

Rule Utilitarianism

If I just said heroism is bad, why do I think more people should think about it? Pretty much entirely because of the “in a way others admire” clause.

Rule utilitarianism is a form of utilitarianism that says an action is right as it conforms to a rule that leads to the greatest good, or that "the rightness or wrongness of a particular action is a function of the correctness of the rule of which it is an instance".

For rule utilitarians, the correctness of a rule is determined by the amount of good it brings about when followed. In contrast, act utilitarians judge an act in terms of the consequences of that act alone (such as stopping at a red light), rather than judging whether it faithfully adhered to the rule of which it was an instance (such as, "always stop at red lights"). Rule utilitarians argue that following rules that tend to lead to the greatest good will have better consequences overall than allowing exceptions to be made in individual instances, even if better consequences can be demonstrated in those instances.

Here, I think the rule that should probably be adopted is this “in a way others admire”.

Let’s look back at Bill Gates. I said before that, although there are some people that admire Bill Gates’ philanthropic efforts, a majority don’t care at all. Why is that? Well, liberals and the left don’t like billionaires, and conservatives and the right think that Bill Gates is a liar trying to rule the world. The world in which Bill Gates gives away more of his wealth before amassing lots of it, or does a better job giving away his wealth than he’s currently doing, and is also more open and is involved in less shady things like being friends with Jeffrey Epstein, is a better one. The world where Bill Gates is admired more is one where he’s forced to do more good to do so. Being perceived as good also lets him do more good.

And of course, let’s look at Sam Bankman-Fried. A majority of Americans did not admire SBF’s actions.[2] For many reasons:

Billionaires bad

Or at least, this was by far the most popular opinion on this behavior.

Seems improbable that someone’s actual motive is “earning to give” (note: his motive very likely was not earning to give!!)

How SBF could’ve been a hero instead:

Given his money away better/faster

Like, really fast.

Done something entrepreneurial that was actually good for the world

Especially with earning to give, it seems hard to appear like a hero.

But I think this also holds true for something like AI alignment. It’s difficult to convince the general public that working on this is a heroic act.

It actually seems pretty easy to convince the layperson that AI could cause lots of problems if developed incorrectly. But their minds mostly go to military AI and AI ethics, less so superintelligence.

In fact, many alignment researchers (and longtermists) are villainized on Twitter by people like Timnit Gebru and Émile Torres. Much work should be done to convince the general public that this is something actually good that should be done.

I’m not saying this is an easy task. It’s something that I’m trying to figure out how to fix. Hopefully more will come out soon from me.

Think Bigger

If you have a goal in life to have positive social impact, your goal might look like one of the following:

1. I want to attempt to have some amount of positive impact on the world.

2. I want to have some amount of positive impact on the world.

3. I want to have a job that produces some amount of positive impact.

4. My main goal in life is to have a positive impact on the world.

5. My only goal in life is to have a positive impact on the world.

6. I want to have some amount of counterfactually positive impact on the world, maybe via a job.

7. My main goal in life is to have a counterfactually positive impact on the world.

8. My only goal in life is to have a counterfactually positive impact on the world.

9. My main goal in life is to have the most counterfactually positive impact on the world I can.

10. My only goal in life is to have the most counterfactually positive impact on the world I can (à la utility maximizing).

And there are lots of variations on these, but this is just a basic list. Most people in the world that fall under this umbrella fall in the first 3 of the list.

Effective Altruism was originally:

My main goal in life is to have a counterfactually positive impact on the world.

My only goal in life is to have a counterfactually positive impact on the world.

My main goal in life is to have the most counterfactually positive impact on the world I can.

And hypothetically it still is. But now you also have people here:

I want to have some amount of counterfactually positive impact on the world, maybe via a job.

And people who think they are here:

My main goal in life is to have a counterfactually positive impact on the world.

My only goal in life is to have a counterfactually positive impact on the world.

My main goal in life is to have the most counterfactually positive impact on the world I can.

But are actually here:

I want to be a hero.

I want to be a counterfactual hero.

OR:

I want to have some amount of counterfactually positive impact on the world, maybe via a job.

Many people take an EA job because of reason 6, when in reality, they actually believe in reasons 7 or 8, and they choose a random EA job because they defer to authority too much. Not all EA jobs are created equal.

There are also many in the movement who are likely here for:

I want to solve challenging problems.

I want prestige.

The common rebuttal here is that working on AI safety is not that prestigious, especially compared to other computer science jobs like MANGA. But I’d argue that a job at OpenAI is already more prestigious than any MANGA job. I’d also argue that, if you think alignment is an issue, then solving it is surely the most prestigious thing you can do.

Similar to work on climate change or pandemic prevention.

I think x-risk is bad, but I’m generally not an altruistic person. Ex. I want to work on x-risk reduction because of fears I have about how it affects myself and/or my family.

I’d like to talk more about this point in a later post. I’m pretty sure this is a controversial take.

These motivations are just as harmful as the reasons I listed for hero optimizing being bad.

More people in EA should not be afraid of trying to be as ambitious as possible to improve the world, as a utility maximizing hero, as long as you’re admired for what you’re doing. If you’re not admired, you probably are no longer utility maximizing! If this is your goal, think very carefully about how you go about this! Many who try to maximize utility fail, possibly because they forgo this hero rule and other rules that seem positive.

When I say fail, I mean sometimes even creating counterfactually negative utility! Again, it is much easier to cause counterfactual harm than counterfactual benefit!

No more Hero idolizing

I saw many EAs have stickers on their laptops that said “What would SBF do?” before SBF blew up.

Idolizing people makes it much harder to later retract your idolization.

It seems easier in the case of someone like Bill Cosby: nobody(?) could’ve imagined Bill Cosby as a serial rapist until the news came out. There were definitely warning signs from someone like Kanye West though. There were also definitely warning signs from SBF:

I knew his Bahamian estate was quite massive before the news came out. I also really did not like his use of money in the Carrick Flynn campaign.

It seems really bad for people to be idolized in the EA community. People like Will MacAskill and Nick Bostrom (who seem to already have warning signs in my opinion), but also others like Peter Wildeford, Rob Wiblin, and Dustin Moskovitz that people generally view positively. Widespread idolization in the community makes it really difficult to separate the community from these people if they end up behaving poorly in the future.

Conclusion

That’s it! I don’t have much to say except that I wrote a conclusion during my first upload but didn’t save it anywhere, so this conclusion will look vaguely different.

This took a while to write: it’s over 4,400 words! My goal this year is to write 50 blog posts, about one a week. This will probably end up being one of the longer posts. If you’d like to stick around for the next 49, please consider subscribing to my mailing list! I promise it will be active. My promise has failed! I hope progress continues though. We make progress by having the will and courage to keep trying. So I gotta keep trying.

Feel free to leave comments or DM me on Twitter @brandonsayler! If you don’t have Twitter, you can also email me at brandon(dot)sayler@gmail(dot)com, or you can try your luck finding me on Facebook Messenger.

[1] To be clear, I’m a strong atheist, but I’m also an Ex-Mormon. More on this another time.

[2] I don’t have any statistics to support this, but this seems obviously true to me.
